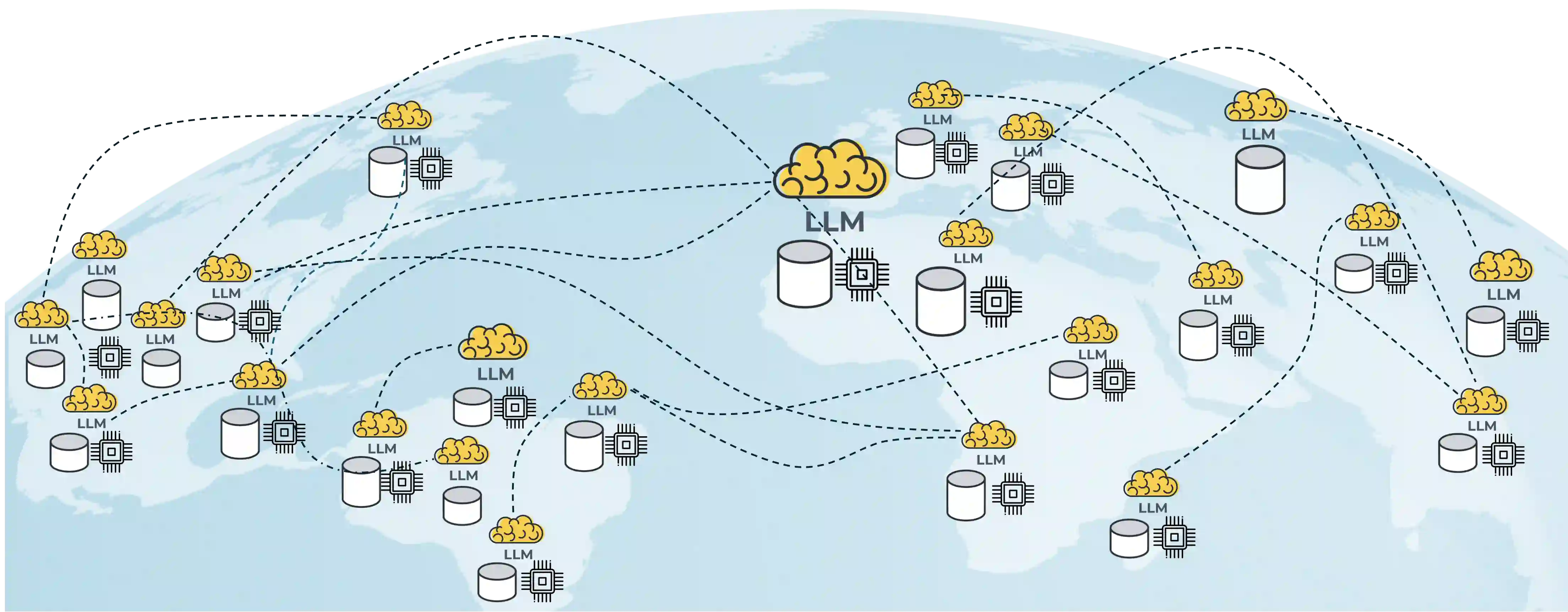

Generative pre-trained large language models (LLMs) have demonstrated impressive performance over a wide range of tasks, thanks to the unprecedented amount of data they have been trained on. As established scaling laws indicate, future improvements in LLM performance depend on the amount of compute and data we can leverage for pre-training. Federated learning (FL) has the potential to unlock the majority of the planet's data and computational resources, which are underutilized by the data-center-focused training methodology of current LLM practice. Our work presents a robust, flexible, and reproducible FL approach that enables large-scale collaboration across institutions to train LLMs. This approach would mobilize more computational and data resources while matching or potentially exceeding centralized performance. We further show that the effectiveness of federated training scales with model size, and we present our approach for training a billion-scale federated LLM using limited resources. This will help data-rich actors become protagonists of LLM pre-training instead of leaving the stage to compute-rich actors alone.
