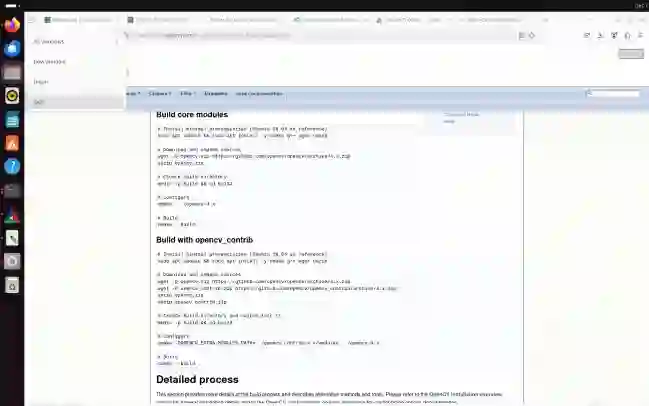

GUI grounding is a critical capability for vision-language models (VLMs) that enables automated interaction with graphical user interfaces by locating target elements from natural language instructions. However, grounding on GUI screenshots remains challenging due to high-resolution images, small UI elements, and ambiguous user instructions. In this work, we propose AdaZoom-GUI, an adaptive zoom-based GUI grounding framework that improves both localization accuracy and instruction understanding. Our approach introduces an instruction refinement module that rewrites natural language commands into explicit and detailed descriptions, allowing the grounding model to focus on precise element localization. In addition, we design a conditional zoom-in strategy that selectively performs a second-stage inference on predicted small elements, improving localization accuracy while avoiding unnecessary computation and context loss on simpler cases. To support this framework, we construct a high-quality GUI grounding dataset and train the grounding model using Group Relative Policy Optimization (GRPO), enabling the model to predict both click coordinates and element bounding boxes. Experiments on public benchmarks demonstrate that our method achieves state-of-the-art performance among models with comparable or even larger parameter sizes, highlighting its effectiveness for high-resolution GUI understanding and practical GUI agent deployment.
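The conditional zoom-in strategy described above can be illustrated with a minimal sketch. This is not the authors' implementation: the `ground_fn` callable, the `area_ratio` threshold, and the `margin` factor are all hypothetical and chosen only for illustration. The sketch assumes the grounding model returns a click point and a bounding box in pixel coordinates local to the region it was given, and that a second pass is run only when the first-pass box covers a small fraction of the screenshot.

```python
from typing import Callable, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2) in pixels
Point = Tuple[float, float]
# Hypothetical interface: given a region of the screenshot, return the click
# point and element box in coordinates local to that region's top-left corner.
GroundFn = Callable[[Box], Tuple[Point, Box]]


def is_small(box: Box, img_w: int, img_h: int, area_ratio: float) -> bool:
    """True if the box covers less than `area_ratio` of the screenshot area."""
    x1, y1, x2, y2 = box
    area = max(0.0, x2 - x1) * max(0.0, y2 - y1)
    return area < area_ratio * img_w * img_h


def zoom_region(box: Box, img_w: int, img_h: int, margin: float) -> Box:
    """Window centered on the box, `margin` times its size, clamped to the image."""
    x1, y1, x2, y2 = box
    cx, cy = (x1 + x2) / 2, (y1 + y2) / 2
    half_w = max(x2 - x1, 1.0) * margin / 2
    half_h = max(y2 - y1, 1.0) * margin / 2
    return (max(0.0, cx - half_w), max(0.0, cy - half_h),
            min(float(img_w), cx + half_w), min(float(img_h), cy + half_h))


def ground_with_conditional_zoom(
    ground_fn: GroundFn,
    img_w: int,
    img_h: int,
    area_ratio: float = 0.001,  # assumed threshold, not from the paper
    margin: float = 3.0,        # assumed context margin, not from the paper
) -> Tuple[Point, Box]:
    # Stage 1: ground on the full screenshot.
    point, box = ground_fn((0.0, 0.0, float(img_w), float(img_h)))
    if not is_small(box, img_w, img_h, area_ratio):
        return point, box  # large element: skip the second pass

    # Stage 2: re-run grounding on a crop around the predicted small element.
    region = zoom_region(box, img_w, img_h, margin)
    (px, py), (bx1, by1, bx2, by2) = ground_fn(region)

    # Map crop-local coordinates back to full-screenshot coordinates.
    ox, oy = region[0], region[1]
    return (px + ox, py + oy), (bx1 + ox, by1 + oy, bx2 + ox, by2 + oy)
```

In this sketch, large elements return after a single pass, so the extra inference cost and the loss of surrounding context are incurred only for small predicted boxes. A real system would also crop and upscale the image for the second pass, which is omitted here.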
