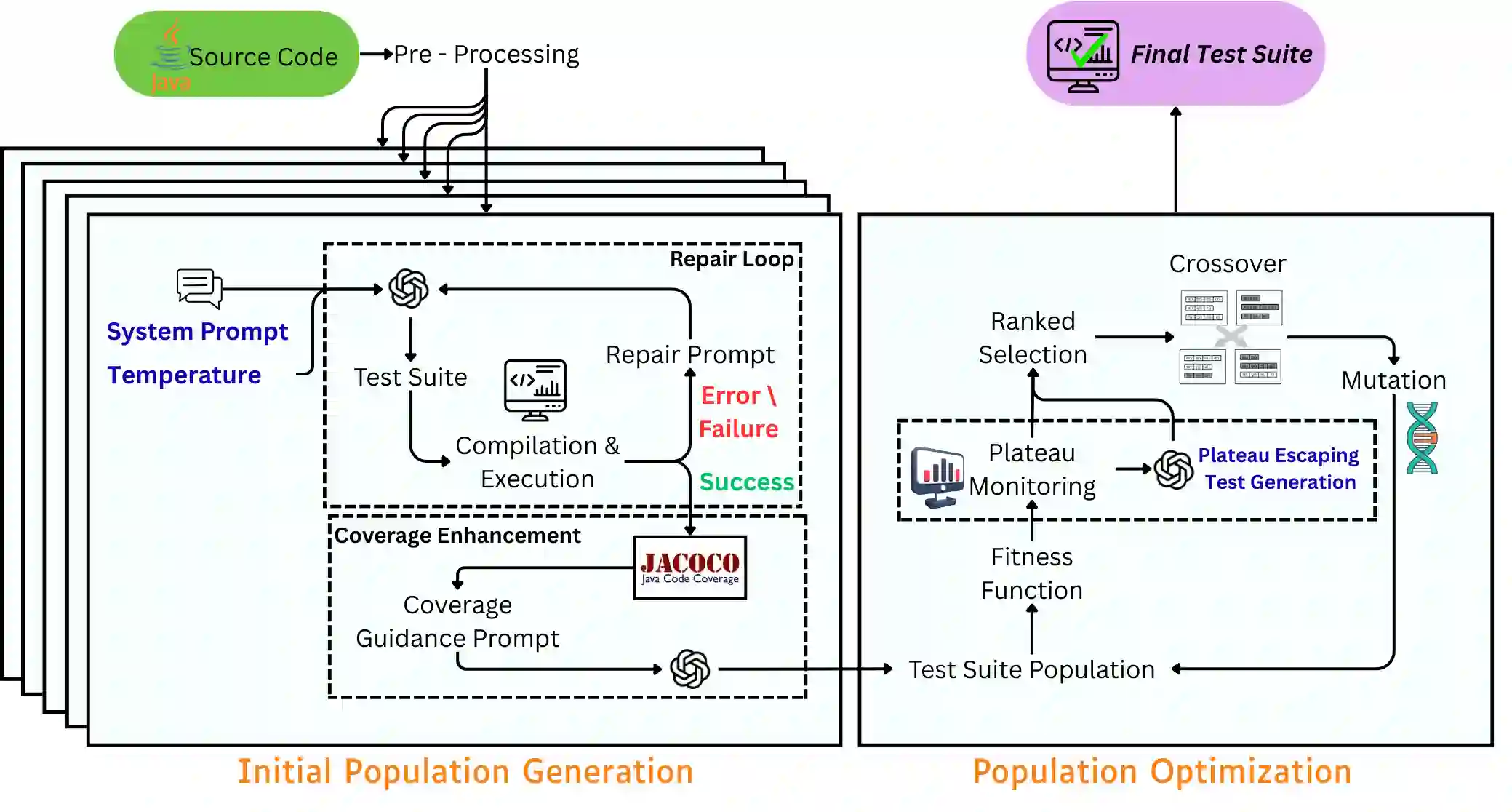

Search-Based Software Testing (SBST) is a well-established approach for automated unit test generation, yet it often suffers from premature convergence and limited diversity in the generated test suites. Recently, Large Language Models (LLMs) have emerged as an alternative technique for unit test generation. We present EvoGPT, a hybrid test generation system that integrates LLM-based test generation with SBST-based test suite optimization. EvoGPT uses LLMs to generate an initial population of test suites and then applies an Evolutionary Algorithm (EA) to further optimize this population. A distinguishing feature of EvoGPT is its explicit enforcement of diversity, achieved by using multiple sampling temperatures and prompt instructions during test generation. In addition, each LLM-generated test is refined through a generation-repair loop and coverage-guided assertion generation. To address evolutionary plateaus, EvoGPT also detects stagnation during the search and injects additional LLM-generated tests that target previously uncovered branches; here, too, diversity is enforced through multiple temperatures and prompt instructions. We evaluate EvoGPT on Defects4J, a standard benchmark for test generation. The results show that EvoGPT achieves, on average, a 10% improvement in both code coverage and mutation score compared with TestART, an LLM-only baseline, and EvoSuite, a standard SBST baseline. An ablation study indicates that explicitly enforcing diversity, both at initialization and during the search, is key to effectively leveraging LLMs for automated unit test generation.
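The search loop described above can be sketched roughly as follows. This is a toy model, not EvoGPT's implementation. A "test" is reduced to the set of branch ids it covers, and fitness is the number of distinct branches a suite covers. The LLM call is replaced by a hypothetical `mock_llm_test` function. The names `TEMPERATURES`, `INSTRUCTIONS`, `evolve`, and `patience`, along with every numeric setting, are illustrative assumptions. The sketch shows two ideas from the text: diverse initialization, with one suite per (temperature, instruction) pair, and stagnation-triggered injection of tests aimed at uncovered branches.

```python
import itertools
import random

# Hypothetical diversity settings; EvoGPT's actual values are not given here.
TEMPERATURES = [0.2, 0.7, 1.0]
INSTRUCTIONS = ["edge cases", "typical usage", "exception paths"]
BRANCHES = frozenset(range(30))  # branch ids of a toy class under test


def mock_llm_test(temperature, instruction, targets, rng):
    """Hypothetical stand-in for an LLM call followed by generation-repair.

    A test is modelled as the frozenset of branch ids it covers. A real
    implementation would place `instruction` in the prompt; this mock ignores
    it and lets higher temperatures sample more branches.
    """
    pool = sorted(targets) if targets else sorted(BRANCHES)
    k = min(len(pool), 1 + int(temperature * 3))
    return frozenset(rng.sample(pool, k))


def covered(suite):
    """Union of branches covered by every test in the suite."""
    return frozenset().union(*suite)


def fitness(suite):
    return len(covered(suite))


def evolve(generations=40, suite_size=4, patience=3, seed=0):
    # suite_size must be >= 2 so that one-point crossover has a cut point.
    rng = random.Random(seed)
    configs = list(itertools.product(TEMPERATURES, INSTRUCTIONS))
    # Diverse initialization: one LLM-seeded suite per (temperature, instruction).
    population = [
        tuple(mock_llm_test(t, ins, None, rng) for _ in range(suite_size))
        for t, ins in configs
    ]
    best = max(population, key=fitness)
    stale, injections = 0, []
    for gen in range(generations):
        # Truncation selection keeps the better half as parents.
        population.sort(key=fitness, reverse=True)
        parents = population[: len(population) // 2]
        children = []
        while len(parents) + len(children) < len(configs):
            a, b = rng.sample(parents, 2)
            cut = rng.randrange(1, suite_size)
            child = list(a[:cut] + b[cut:])  # one-point crossover
            if rng.random() < 0.3:  # mutation: swap in a test from another parent
                child[rng.randrange(len(child))] = rng.choice(rng.choice(parents))
            children.append(tuple(child))
        population = parents + children
        gen_best = max(population, key=fitness)
        if fitness(gen_best) > fitness(best):
            best, stale = gen_best, 0
        else:
            stale += 1
        # Stagnation: inject tests aimed at still-uncovered branches, again
        # cycling through every temperature/instruction pair for diversity.
        uncovered = BRANCHES - covered(best)
        if stale >= patience and uncovered:
            injections.append(gen)
            extra = tuple(mock_llm_test(t, ins, uncovered, rng) for t, ins in configs)
            population[-1] = best + extra
            stale = 0
    return max([best] + population, key=fitness), injections
```

For example, `best, when = evolve(seed=1)` returns the best suite found and the generations at which injections fired, and `fitness(best)` gives its branch coverage. Truncation selection and one-point crossover are generic EA operators, chosen here only for brevity. They are not a claim about the operators EvoGPT uses.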

翻译:基于搜索的软件测试(SBST)是一种成熟的自动化单元测试生成方法,但其生成的测试套件常面临早熟收敛和多样性受限的问题。近期,大型语言模型(LLMs)已成为单元测试生成的替代技术。本文提出EvoGPT,一种融合基于LLM的测试生成与基于SBST的测试套件优化的混合测试生成系统。EvoGPT使用LLMs生成初始测试套件种群,并采用进化算法(EA)对该种群进行进一步优化。EvoGPT的显著特征是通过在测试生成阶段使用多温度参数和多样化提示指令,显式地增强多样性。此外,每个LLM生成的测试均通过生成-修复循环和覆盖率引导的断言生成进行精炼。针对进化停滞问题,EvoGPT在搜索过程中检测平台期,并注入针对先前未覆盖分支的额外LLM生成测试。此过程同样通过多温度参数和提示指令强制保持多样性。我们在测试生成标准基准Defects4J上评估EvoGPT。实验结果表明,相较于纯LLM基线TestART和标准SBST基线EvoSuite,EvoGPT在代码覆盖率和变异分数指标上平均提升10%。消融研究表明,在初始化和搜索过程中显式增强多样性,是有效利用LLMs进行自动化单元测试生成的关键。