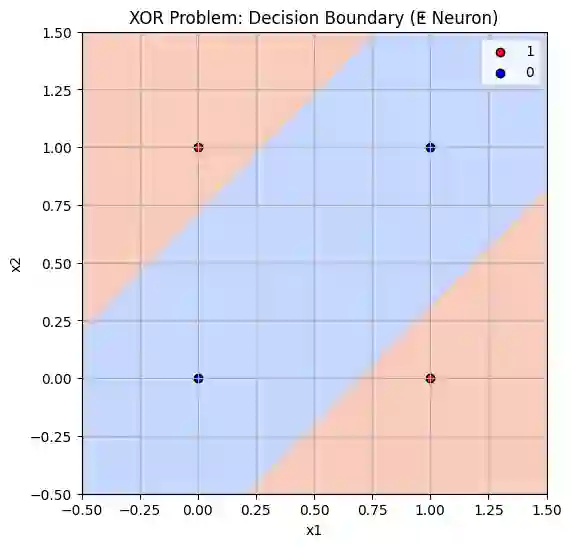

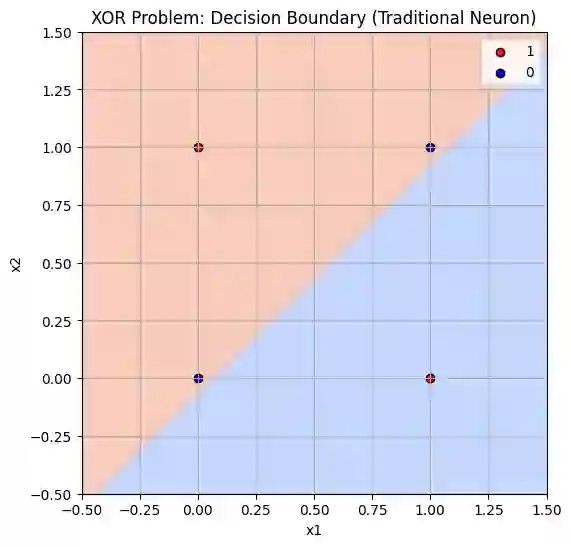

We introduce the Neural Matter Network (NMN), a neural network built on the yat-product that achieves non-linear pattern recognition without activation functions. Our key innovation is the yat-product, which naturally induces non-linearity by projecting inputs into a pseudo-metric space. This eliminates the need for traditional activation functions; the only one retained is a softmax layer that produces the final class probability distribution. The approach simplifies the network architecture and makes the network's decision-making process more transparent. Our empirical evaluation across different datasets shows that NMN consistently outperforms traditional MLPs. These results challenge the assumption that separate activation functions are necessary for effective deep-learning models. The implications extend beyond the immediate architectural benefits: by eliminating intermediate activation functions while preserving non-linear capabilities, yat-MLP establishes a new paradigm for neural network design that combines simplicity with effectiveness. Most importantly, our approach offers insight into the traditionally opaque "black-box" nature of neural networks, giving a clearer understanding of how these models process and classify information.
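The abstract does not state the yat-product formula, so the following is only a minimal NumPy sketch under a loudly stated assumption: that the yat-product of an input `x` and a weight vector `w` is the squared dot product divided by the squared Euclidean distance, `(w·x)² / (‖w − x‖² + ε)`. The function names (`yat_product`, `softmax`, `forward`), the `eps` term, and the layer sizes are hypothetical and chosen for illustration. The sketch stacks two such layers with no intermediate activation and keeps only a final softmax, as the abstract describes.

```python
import numpy as np

def yat_product(x, w, eps=1e-6):
    """ASSUMED form of the yat-product: (w.x)^2 / (||w - x||^2 + eps).

    x: (batch, features), w: (units, features) -> (batch, units).
    """
    dot = x @ w.T                              # pairwise dot products
    diff = x[:, None, :] - w[None, :, :]       # pairwise differences
    sq_dist = (diff ** 2).sum(axis=-1)         # squared Euclidean distances
    return dot ** 2 / (sq_dist + eps)          # eps avoids division by zero

def softmax(z):
    """Row-wise softmax, shifted by the row max for numerical stability."""
    z = z - z.max(axis=1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=1, keepdims=True)

def forward(x, w1, w2):
    """Two yat-product layers, no intermediate activation, final softmax."""
    hidden = yat_product(x, w1)
    logits = yat_product(hidden, w2)
    return softmax(logits)

# Hypothetical sizes: 4 samples, 3 features, 5 hidden units, 2 classes.
rng = np.random.default_rng(0)
x = rng.normal(size=(4, 3))
w1 = rng.normal(size=(5, 3))
w2 = rng.normal(size=(2, 5))
probs = forward(x, w1, w2)   # shape (4, 2); each row sums to 1
```

Under this assumed form the layer is already non-linear in its input: with `w = [1, 0]`, the input `[1, 1]` gives roughly 1.0, while the doubled input `[2, 2]` gives roughly 0.8 rather than 2.0.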
