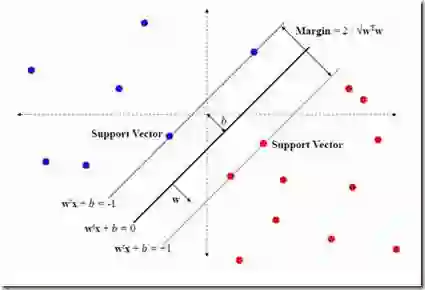

Federated learning is a distributed, collaborative machine learning paradigm that has gained strong momentum in recent years. In federated learning, a central server periodically synchronizes models with clients and aggregates the models that clients train locally, without requiring access to their local data. Despite its potential, deploying federated learning still faces several challenges. Chief among them is slow convergence, which is largely caused by data heterogeneity. Slow convergence is particularly problematic in cross-device federated learning, where clients may be severely limited in computing power and storage. Countermeasures that add computation or memory cost on the client side, such as auxiliary objective terms or more local training iterations, can therefore be impractical. In this paper, we propose TurboSVM-FL, a novel federated aggregation strategy that imposes no additional computational burden on clients and can significantly accelerate convergence for federated classification tasks. The gain is largest when clients are "lazy" and train their models for only a few epochs before the next global aggregation. TurboSVM-FL makes extensive use of support vector machines to perform selective aggregation and max-margin spread-out regularization on class embeddings. We evaluate TurboSVM-FL on multiple datasets, including FEMNIST, CelebA, and Shakespeare, using user-independent validation with non-IID data distributions. Our results show that TurboSVM-FL can significantly outperform popular existing algorithms in convergence rate and reduce the number of communication rounds. It also delivers better test metrics, including accuracy, F1 score, and Matthews correlation coefficient (MCC).
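The abstract does not spell out how the server uses the SVM during aggregation. The sketch below is a rough, hypothetical illustration of the general idea of SVM-based selective aggregation. It is not the TurboSVM-FL algorithm. It assumes that each client uploads one embedding vector per class, for example a row of its final linear layer. The server treats every such vector as a labeled point and fits a one-vs-rest linear SVM per class. For each class, it then averages only that class's embeddings that lie on or inside the margin, i.e. the support vectors. The helper names `train_linear_svm` and `selective_aggregate`, the subgradient-descent solver, the one-vs-rest setup, and the tolerance `tol` are all assumptions made for illustration. The max-margin spread-out regularization is not modeled here.

```python
import numpy as np


def train_linear_svm(X, y, lam=0.01, epochs=200, lr=0.1):
    """Hypothetical helper: soft-margin linear SVM via full-batch subgradient descent.

    Minimizes lam/2 * ||w||^2 + mean(max(0, 1 - y * (X @ w + b))), with y in {-1, +1}.
    """
    n, d = X.shape
    w = np.zeros(d)
    b = 0.0
    for _ in range(epochs):
        margins = y * (X @ w + b)
        active = margins < 1  # samples inside the margin or misclassified
        grad_w = lam * w - (y[active][:, None] * X[active]).sum(axis=0) / n
        grad_b = -y[active].sum() / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


def selective_aggregate(client_embeddings, tol=1e-3, **svm_kwargs):
    """Hypothetical server step: average only support-vector class embeddings.

    client_embeddings: array of shape (K clients, C classes, d).
    Returns a (C, d) array of aggregated class embeddings.
    """
    K, C, d = client_embeddings.shape
    X = client_embeddings.reshape(K * C, d)  # row k*C + c holds client k, class c
    labels = np.tile(np.arange(C), K)        # class label of each row
    global_emb = np.empty((C, d))
    for c in range(C):
        y = np.where(labels == c, 1.0, -1.0)  # one-vs-rest labels for class c
        w, b = train_linear_svm(X, y, **svm_kwargs)
        margins = y * (X @ w + b)
        # Support vectors of class c: its own embeddings on or inside the margin.
        sv = (labels == c) & (margins <= 1 + tol)
        if not sv.any():
            sv = labels == c  # fall back to plain averaging for this class
        global_emb[c] = X[sv].mean(axis=0)
    return global_emb
```

If every client sends identical embeddings, `selective_aggregate` returns those embeddings unchanged. With heterogeneous clients, each class embedding is the mean of a subset of that class's client embeddings, namely the ones closest to the decision boundary. The hand-written solver only keeps the sketch dependency-light. A library SVM, such as scikit-learn's `SVC(kernel="linear")`, whose `support_` attribute lists the indices of the support vectors, could replace it.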
