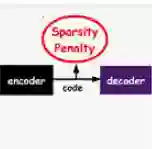

The rapid development of generative AI has transformed content creation, communication, and human development. However, the technology raises serious concerns in high-stakes domains, which calls for rigorous methods to analyze and evaluate AI-generated content. Existing analytic methods often treat an image as an indivisible whole. Real-world AI failures, however, typically show up as specific visual patterns that can evade holistic detection and are better suited to granular, decomposed analysis. Here we introduce Language-Grounded Sparse Encoders (LanSE), a content analysis tool that decomposes images into interpretable visual patterns, each described in natural language. By combining interpretability modules with large multimodal models, LanSE automatically identifies visual patterns within a data modality. Our method discovers more than 5,000 visual patterns with 93% human agreement. It provides decomposed evaluation that outperforms existing methods, establishes the first systematic evaluation of physical plausibility, and extends to medical imaging. Because LanSE extracts language-grounded patterns, it can be adapted to other fields, such as biology and geography, and to other data modalities, such as protein structures and time series, thereby advancing content analysis for generative AI.
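
The idea of decomposing an image into a few labeled patterns can be illustrated with a minimal sketch of a top-k sparse encoder. The sketch assumes that each image has already been mapped to a dense embedding vector and that each encoder unit has been paired with a natural-language label, for example by a large multimodal model. Both of these are our assumptions and are not details given in the abstract. The functions `encode_topk`, `decode`, and `describe`, the toy identity dictionary, and the example labels are all hypothetical. They are not LanSE's actual implementation or API.

```python
# Hypothetical sketch of a top-k sparse encoder over image embeddings.
# ASSUMPTIONS: the embedding, dictionary, labels, and k are illustrative only;
# this is not LanSE's implementation or API.


def relu(x: float) -> float:
    return x if x > 0.0 else 0.0


def encode_topk(embedding: list[float], weights: list[list[float]],
                bias: list[float], k: int) -> dict[int, float]:
    """Keep only the k strongest positive unit activations (unit index -> activation)."""
    pre = [relu(sum(w * x for w, x in zip(row, embedding)) + b)
           for row, b in zip(weights, bias)]
    ranked = sorted(range(len(pre)), key=lambda i: pre[i], reverse=True)
    return {i: pre[i] for i in ranked[:k] if pre[i] > 0.0}


def decode(codes: dict[int, float], atoms: list[list[float]], dim: int) -> list[float]:
    """Approximate the embedding as a sparse weighted sum of dictionary atoms."""
    out = [0.0] * dim
    for i, a in codes.items():
        for j in range(dim):
            out[j] += a * atoms[i][j]
    return out


def describe(codes: dict[int, float], labels: list[str]) -> list[str]:
    """Return the natural-language label of each active unit, strongest first."""
    return [labels[i] for i in sorted(codes, key=codes.get, reverse=True)]


if __name__ == "__main__":
    # Toy 4-unit dictionary with hypothetical labels a multimodal model might assign.
    identity = [[1.0 if i == j else 0.0 for j in range(4)] for i in range(4)]
    labels = ["extra fingers on a hand", "garbled rendered text",
              "shadow inconsistent with the light source",
              "object floating without support"]
    codes = encode_topk([0.1, 0.9, -0.3, 0.5], identity, [0.0] * 4, k=2)
    print(codes)                       # {1: 0.9, 3: 0.5}
    print(describe(codes, labels))     # ['garbled rendered text', 'object floating without support']
    print(decode(codes, identity, 4))  # [0.0, 0.9, 0.0, 0.5]
```

In this example, the toy embedding activates units 1 and 3. The image is therefore summarized by two labeled patterns, and the sparse sum of their atoms approximates the input. Keeping only the top k units is one common way to force each image to be explained by a handful of patterns. The abstract does not say whether LanSE uses this particular sparsity mechanism.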