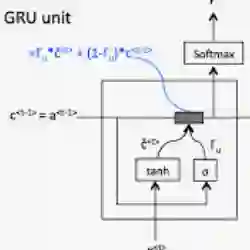

This work explores the use of the AMD Xilinx Versal Adaptable Intelligent Engine (AIE) to accelerate Gated Recurrent Unit (GRU) inference for latency-constrained applications. We present a custom workload distribution framework across the AIE's vector processors and propose a hybrid AIE-Programmable Logic (PL) design to optimize computational efficiency. Our approach parallelizes over the rows of the matrices, using as many of the AIE vector processors as possible so that all elements of the resulting vector are computed at the same time, as an alternative to cascade stream pipelining.
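As a rough illustration of row-wise parallelization, the following pure-Python sketch splits the rows of a matrix across a number of workers, each of which computes its own slice of the output vector. It is a conceptual model only, not the authors' AIE kernel code: the names `split_rows`, `tile_matvec`, and `row_parallel_matvec` are hypothetical, the even contiguous row split is an assumption, and the loop over tiles runs sequentially here, whereas on hardware each tile would work concurrently.

```python
def split_rows(n_rows, n_tiles):
    """Return contiguous (start, stop) row ranges, one per tile, as even as possible."""
    base, extra = divmod(n_rows, n_tiles)
    ranges, start = [], 0
    for t in range(n_tiles):
        # The first `extra` tiles take one additional row each.
        stop = start + base + (1 if t < extra else 0)
        ranges.append((start, stop))
        start = stop
    return ranges


def tile_matvec(rows, x):
    """Work done by one tile: a dot product of each assigned row with x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in rows]


def row_parallel_matvec(W, x, n_tiles):
    """Compute y = W @ x by giving each tile a disjoint block of rows of W.

    Every tile needs the full input vector x but produces only its own
    output elements, so no partial sums are passed between tiles.
    """
    y = []
    for start, stop in split_rows(len(W), n_tiles):
        # Sequential stand-in for tiles that would run at the same time.
        y.extend(tile_matvec(W[start:stop], x))
    return y


if __name__ == "__main__":
    W = [[1, 2], [3, 4], [5, 6]]
    x = [1, 1]
    print(row_parallel_matvec(W, x, n_tiles=2))
```

In this model each output element depends on exactly one tile, which is the contrast with a cascade-style pipeline, where partial sums for the same output element are passed from one tile to the next.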
