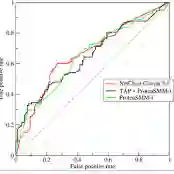

Multiple binary responses arise in many modern data-analytic problems. Although fitting a separate logistic regression to each response is computationally attractive, it ignores shared structure and can be statistically inefficient, especially in high-dimensional and class-imbalanced regimes. Low-rank models offer a natural way to encode latent dependence across tasks, but existing methods for binary data are largely likelihood-based and focus on pointwise classification rather than ranking performance. In this work, we propose a unified framework for learning with multiple binary responses that directly targets discrimination by minimizing a surrogate loss for the area under the ROC curve (AUC). The method aggregates pairwise AUC surrogate losses across responses while imposing a low-rank constraint on the coefficient matrix to exploit shared structure. We develop a scalable projected gradient descent algorithm based on truncated singular value decomposition. Because the pairwise loss depends only on differences of linear predictors, both computation and analysis simplify. We establish non-asymptotic convergence guarantees showing that, under suitable regularity conditions, the algorithm converges linearly up to the minimax-optimal statistical precision. Extensive simulation studies demonstrate that the proposed method is robust in challenging settings such as label switching and data contamination, and that it consistently outperforms likelihood-based approaches.
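To make the estimator concrete, here is a minimal NumPy sketch of projected gradient descent on a low-rank, pairwise AUC surrogate objective. The abstract does not specify the surrogate, the normalization, or the step size, so this sketch assumes a logistic pairwise surrogate $\phi(t) = \log(1 + e^{-t})$, averages over positive-negative pairs and over the $q$ responses, and uses a fixed step size:

$$\min_{\operatorname{rank}(B)\le r}\ \frac{1}{q}\sum_{k=1}^{q}\frac{1}{n_k^+ n_k^-}\sum_{i:\,y_{ik}=1}\ \sum_{j:\,y_{jk}=0}\phi\big((x_i-x_j)^\top b_k\big).$$

The function names (`pairwise_auc_loss_and_grad`, `project_rank`, `fit_low_rank_auc`, `empirical_auc`, `simulate`) and the simulated data are hypothetical illustrations, not the authors' implementation.

```python
import numpy as np


def pairwise_auc_loss_and_grad(X, Y, B):
    """Logistic AUC surrogate averaged over the q responses, and its gradient.

    Assumed loss: for response k, the mean of log(1 + exp(-(x_i - x_j)^T b_k))
    over all pairs with Y[i, k] == 1 and Y[j, k] == 0.
    """
    q = Y.shape[1]
    S = X @ B  # n x q matrix of linear predictors
    loss, grad = 0.0, np.zeros_like(B)
    for k in range(q):
        pos = Y[:, k] == 1
        neg = ~pos
        m = pos.sum() * neg.sum()  # number of (positive, negative) pairs
        if m == 0:
            raise ValueError(f"response {k} needs both classes")
        # Only differences of linear predictors enter, so no intercept is needed.
        D = S[pos, k][:, None] - S[neg, k][None, :]
        loss += np.logaddexp(0.0, -D).sum() / m
        # d/dt log(1 + exp(-t)) = -1 / (1 + exp(t)), evaluated stably
        W = -np.exp(-np.logaddexp(0.0, D))
        # sum_ij W_ij (x_i - x_j) = X_pos^T (row sums) - X_neg^T (column sums)
        grad[:, k] = (X[pos].T @ W.sum(axis=1) - X[neg].T @ W.sum(axis=0)) / m
    return loss / q, grad / q


def project_rank(B, r):
    """Best rank-r approximation of B in Frobenius norm (truncated SVD)."""
    U, s, Vt = np.linalg.svd(B, full_matrices=False)
    return (U[:, :r] * s[:r]) @ Vt[:r, :]


def fit_low_rank_auc(X, Y, rank, step=1.0, n_iter=200):
    """Projected gradient descent onto matrices of rank at most `rank`."""
    B = np.zeros((X.shape[1], Y.shape[1]))  # rank 0, hence feasible
    history = []
    for _ in range(n_iter):
        loss, grad = pairwise_auc_loss_and_grad(X, Y, B)
        history.append(loss)
        B = project_rank(B - step * grad, rank)
    return B, history


def empirical_auc(scores, y):
    """Fraction of (positive, negative) pairs ranked correctly; ties count 1/2."""
    D = scores[y == 1][:, None] - scores[y == 0][None, :]
    return np.mean(D > 0) + 0.5 * np.mean(D == 0)


def simulate(n=300, p=20, q=5, rank=2, seed=0):
    """Hypothetical data: logistic responses driven by a rank-`rank` matrix."""
    rng = np.random.default_rng(seed)
    X = rng.standard_normal((n, p))
    B_true = rng.standard_normal((p, rank)) @ rng.standard_normal((rank, q)) / np.sqrt(p)
    prob = 1.0 / (1.0 + np.exp(-(X @ B_true)))
    Y = (rng.random((n, q)) < prob).astype(int)
    return X, Y, B_true


if __name__ == "__main__":
    X, Y, B_true = simulate()
    B_hat, history = fit_low_rank_auc(X, Y, rank=2)
    aucs = [empirical_auc(X @ B_hat[:, k], Y[:, k]) for k in range(Y.shape[1])]
    print(f"loss {history[0]:.3f} -> {history[-1]:.3f}")
    print("rank:", np.linalg.matrix_rank(B_hat), "train AUCs:", np.round(aucs, 3))
```

Because every iterate is feasible and the truncated SVD returns the closest rank-$r$ matrix, a step size below the reciprocal of the gradient's Lipschitz constant guarantees that each projected step does not increase the loss. The fixed `step=1.0` is an assumption that suits this simulated scale; it is not a recommendation from the source.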
