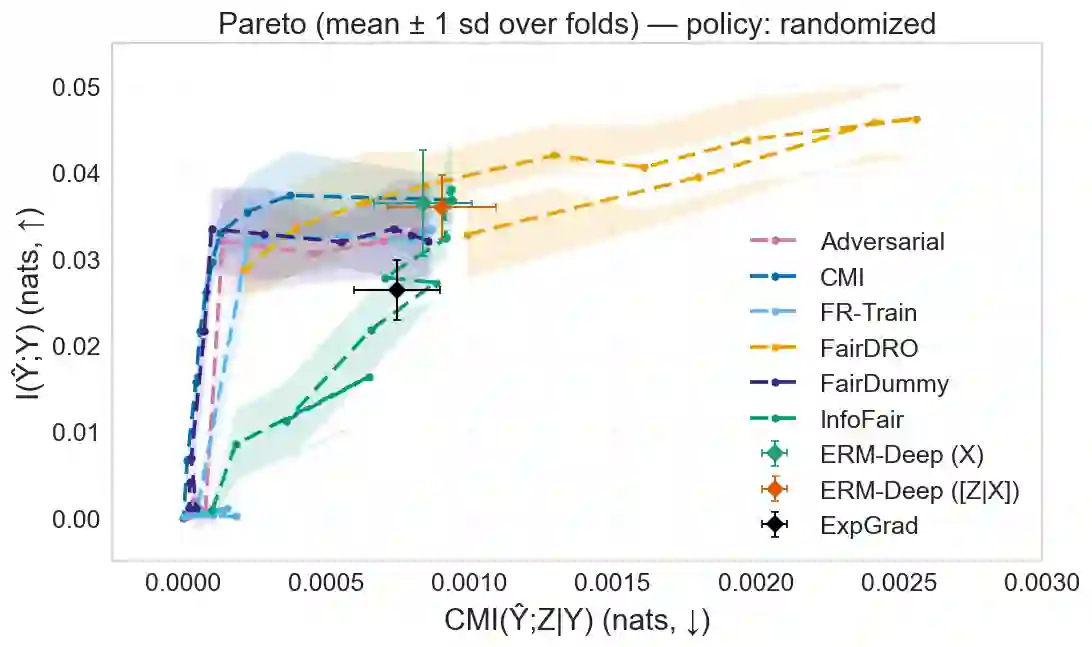

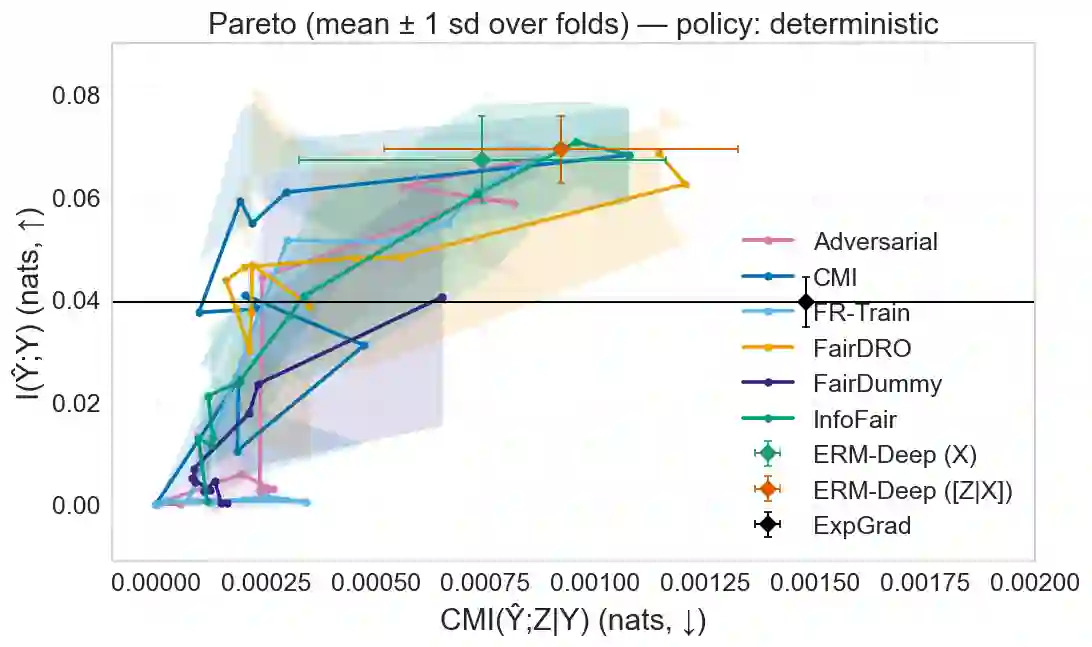

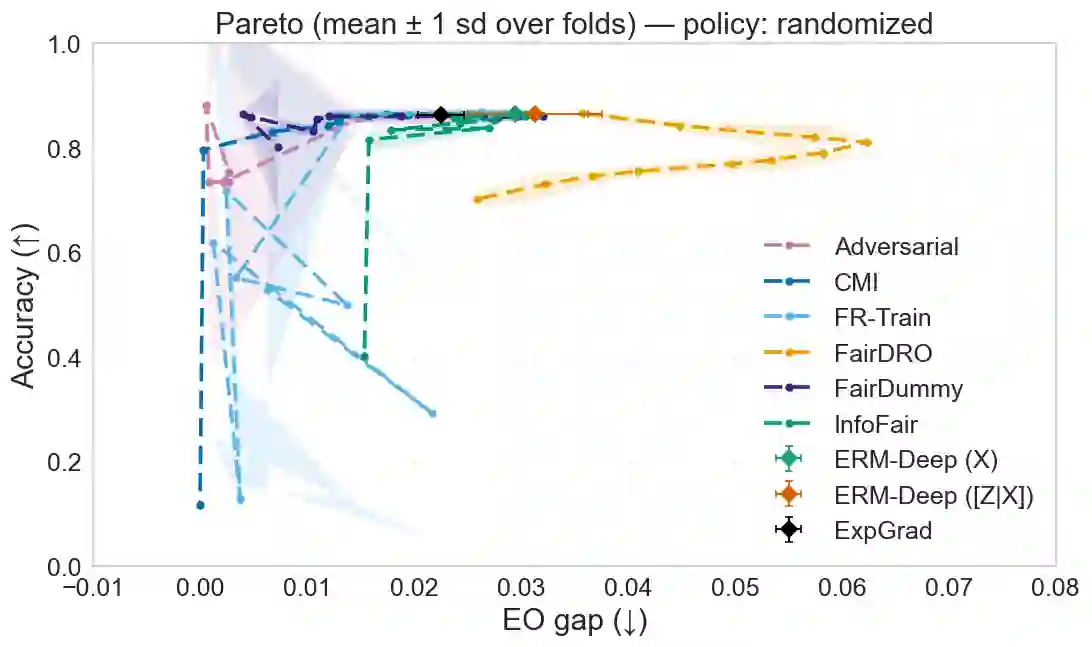

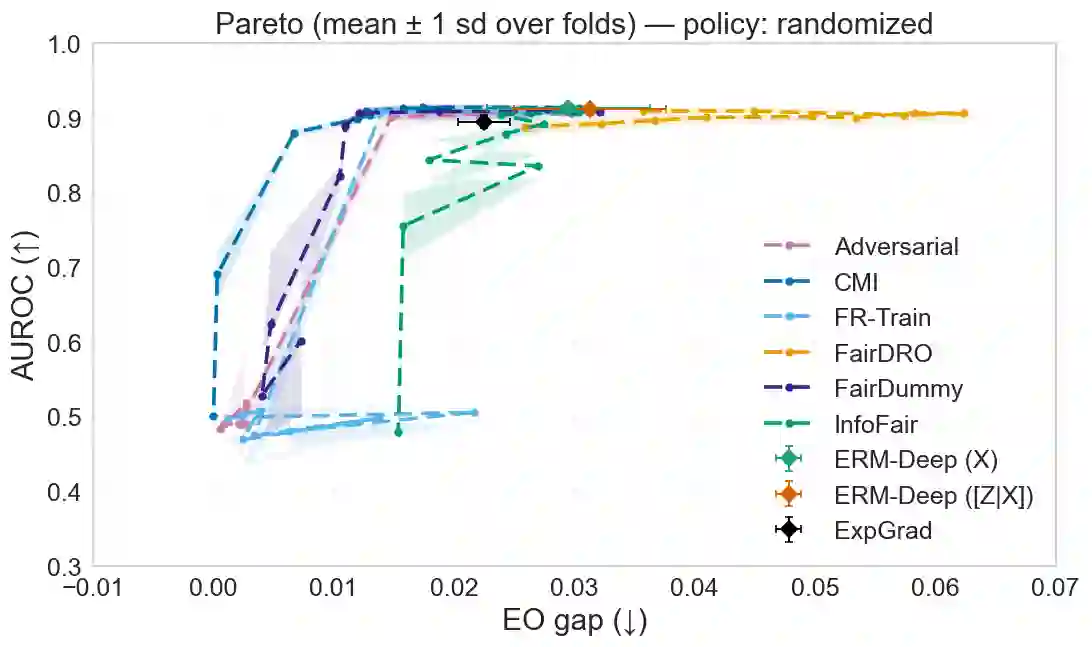

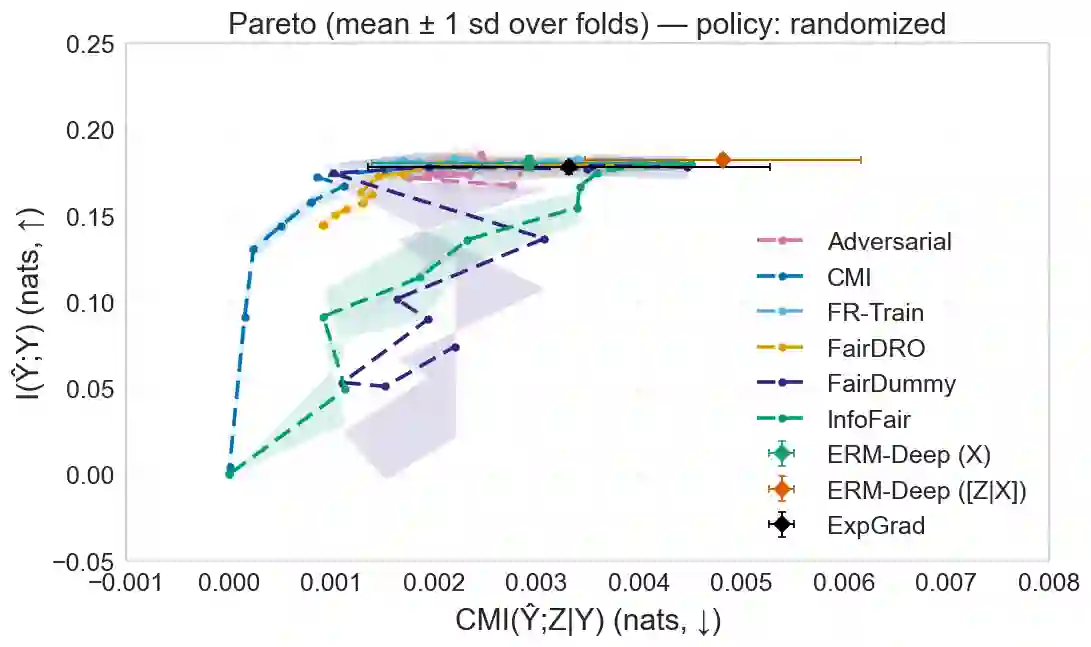

We study the Pareto frontier (optimal trade-off) between utility and separation, a fairness criterion requiring predictive independence from sensitive attributes conditional on the true outcome. Through an information-theoretic lens, we prove a characterization of the utility-separation Pareto frontier, establish its concavity, and thereby prove the increasing marginal cost of separation in terms of utility. In addition, we characterize the conditions under which this trade-off becomes strict, providing a guide for trade-off selection in practice. Based on the theoretical characterization, we develop an empirical regularizer based on conditional mutual information (CMI) between predictions and sensitive attributes given the true outcome. The CMI regularizer is compatible with any deep model trained via gradient-based optimization and serves as a scalar monitor of residual separation violations, offering tractable guarantees during training. Finally, numerical experiments support our theoretical findings: across COMPAS, UCI Adult, UCI Bank, and CelebA, the proposed method substantially reduces separation violations while matching or exceeding the utility of established baseline methods. This study thus offers a provable, stable, and flexible approach to enforcing separation in deep learning.
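To make the monitored quantity concrete: separation holds exactly when the conditional mutual information $I(\hat{Y}; A \mid Y)$ between predictions $\hat{Y}$ and sensitive attribute $A$ given the true outcome $Y$ is zero. The sketch below is a simple plug-in estimate of that quantity from discrete samples, assuming hard (discretized) predictions and a categorical sensitive attribute. It is an illustration only, not the regularizer proposed in the paper, which must be differentiable to be used in gradient-based training. The function name `conditional_mutual_information` and its arguments are hypothetical.

```python
import numpy as np


def conditional_mutual_information(y_pred, a, y_true):
    """Plug-in estimate of I(Y_hat; A | Y) in nats from discrete samples.

    Illustrative assumption: all three inputs are 1-D arrays of discrete
    labels with equal length. This is a monitoring sketch, not a
    differentiable training loss.
    """
    y_pred = np.asarray(y_pred)
    a = np.asarray(a)
    y_true = np.asarray(y_true)
    n = len(y_true)

    cmi = 0.0
    for y in np.unique(y_true):
        # Restrict to the stratum Y = y and weight it by P(Y = y).
        mask = y_true == y
        p_y = mask.sum() / n
        preds, attrs = y_pred[mask], a[mask]

        for v in np.unique(preds):
            p_v = np.mean(preds == v)  # P(Y_hat = v | Y = y)
            for s in np.unique(attrs):
                p_s = np.mean(attrs == s)  # P(A = s | Y = y)
                p_vs = np.mean((preds == v) & (attrs == s))  # joint given Y = y
                if p_vs > 0:
                    cmi += p_y * p_vs * np.log(p_vs / (p_v * p_s))
    return float(cmi)


# Toy data: two outcome strata, balanced sensitive attribute.
y_true = np.array([0, 0, 0, 0, 1, 1, 1, 1])
a = np.array([0, 0, 1, 1, 0, 0, 1, 1])

# Predictions independent of A within each stratum: separation holds.
fair_pred = np.array([0, 1, 0, 1, 0, 1, 0, 1])
# Predictions that copy A: maximal separation violation on this data.
unfair_pred = a.copy()

cmi_fair = conditional_mutual_information(fair_pred, a, y_true)
cmi_unfair = conditional_mutual_information(unfair_pred, a, y_true)
```

On this toy data the estimate is zero for `fair_pred` and equals $\log 2$ nats for `unfair_pred`, since the prediction then reveals the binary attribute completely within each outcome stratum. A scalar of this kind is what one would track over training to see how much residual separation violation remains.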
