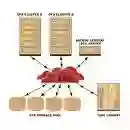

Graph Neural Networks (GNNs) have demonstrated strong capabilities in processing structured data. Traditional GNNs typically treat every feature dimension equally during graph convolution, which raises an important question: is graph convolution equally beneficial for every feature? If not, convolving certain feature dimensions can be harmful, yielding results even worse than those of convolution-free models. To assess the impact of graph convolution on features, prior studies proposed metrics based on feature homophily, which measure how consistent a feature is with the graph topology. However, these metrics align poorly with GNN performance and have not been effectively used to guide feature selection in GNNs. To address these limitations, we introduce a novel metric, Topological Feature Informativeness (TFI), that distinguishes GNN-favored from GNN-disfavored features; its effectiveness is validated through both theoretical analysis and empirical observations. Based on TFI, we propose a simple yet effective Graph Feature Selection (GFS) method, which processes the two groups separately: GNN-favored features with GNNs and GNN-disfavored features with non-GNN models. Compared to the original GNNs, GFS significantly improves the extraction of useful topological information from each feature at comparable computational cost. Extensive experiments in which GFS is applied to 8 baseline and state-of-the-art (SOTA) GNN architectures across 10 datasets show that 83.75% of the GFS-augmented cases achieve significant performance boosts. Furthermore, feature selection based on our proposed TFI metric outperforms other feature selection methods. These results validate the effectiveness of both GFS and TFI. Additionally, we demonstrate that GFS's improvements are robust to hyperparameter tuning, highlighting its potential as a universal method for enhancing various GNN architectures.
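The split that GFS performs can be illustrated with a minimal sketch. The abstract does not say how TFI is computed, so the per-feature `scores` below are placeholder values, and the `threshold`, the mean aggregation with a self-loop, and all function names are assumptions made for illustration rather than the authors' implementation. In a full pipeline the first output would presumably feed a GNN and the second a non-GNN model such as an MLP.

```python
from typing import List, Sequence, Tuple

Matrix = List[List[float]]


def split_features_by_score(
    scores: Sequence[float], threshold: float
) -> Tuple[List[int], List[int]]:
    """Partition feature (column) indices by a per-feature score.

    The scores stand in for TFI values; how TFI is actually computed
    is not given in the abstract (assumption).
    """
    favored = [j for j, s in enumerate(scores) if s >= threshold]
    disfavored = [j for j, s in enumerate(scores) if s < threshold]
    return favored, disfavored


def mean_aggregate(
    x: Matrix, neighbors: Sequence[Sequence[int]], cols: Sequence[int]
) -> Matrix:
    """One round of mean neighbor aggregation (self-loop included),
    applied only to the selected columns. This is a stand-in for a
    graph convolution layer, not a specific GNN architecture."""
    out = []
    for i in range(len(x)):
        group = [i] + list(neighbors[i])
        out.append([sum(x[k][j] for k in group) / len(group) for j in cols])
    return out


def gfs_inputs(
    x: Matrix,
    neighbors: Sequence[Sequence[int]],
    scores: Sequence[float],
    threshold: float,
) -> Tuple[Matrix, Matrix]:
    """Return (graph_branch, plain_branch): favored features after
    aggregation, and disfavored features left untouched."""
    favored, disfavored = split_features_by_score(scores, threshold)
    graph_branch = mean_aggregate(x, neighbors, favored)
    plain_branch = [[row[j] for j in disfavored] for row in x]
    return graph_branch, plain_branch


# Toy path graph 0 - 1 - 2 with two features per node.
x = [[1.0, 5.0], [3.0, 7.0], [5.0, 0.0]]
neighbors = [[1], [0, 2], [1]]
scores = [0.9, 0.1]  # placeholder scores, not real TFI values

graph_branch, plain_branch = gfs_inputs(x, neighbors, scores, threshold=0.5)
print(graph_branch)  # [[2.0], [3.0], [4.0]]
print(plain_branch)  # [[5.0], [7.0], [0.0]]
```

In this toy example feature 0 is smoothed over the graph while feature 1 bypasses the graph entirely, which is the separation the abstract describes.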
