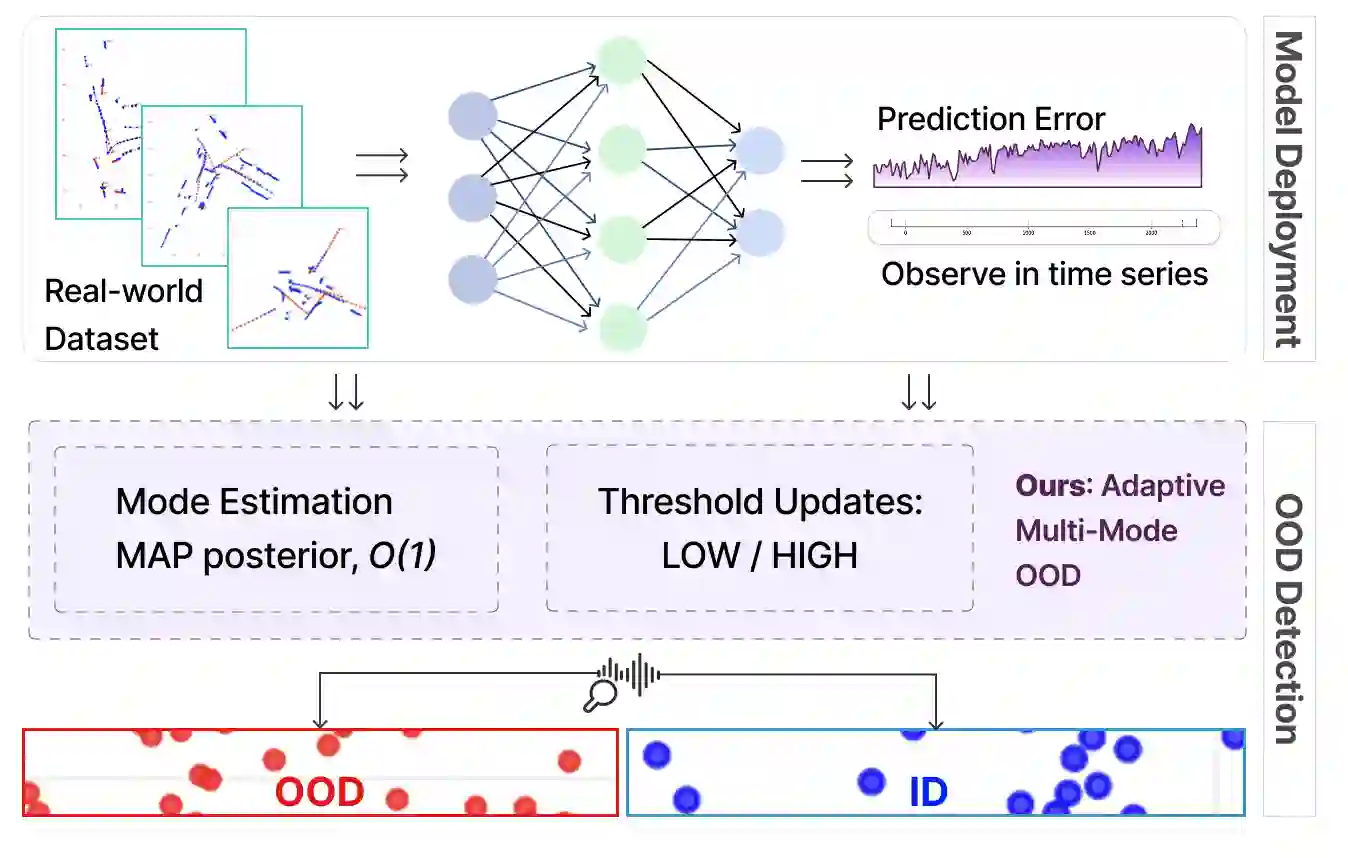

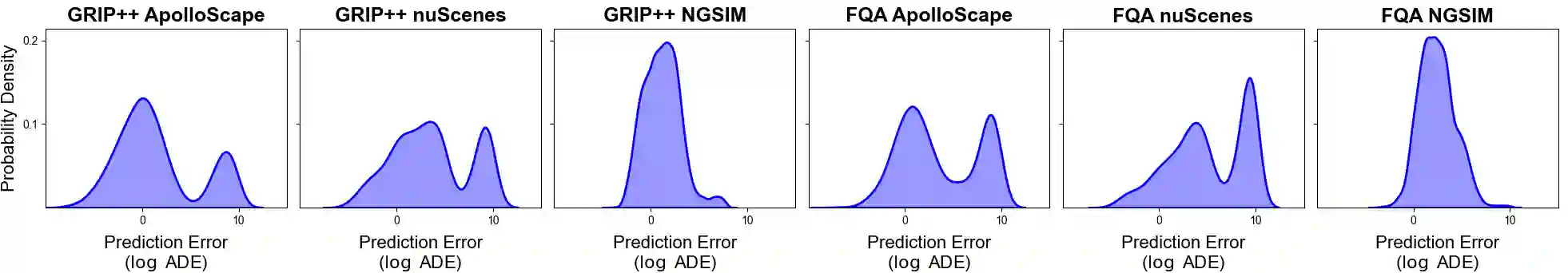

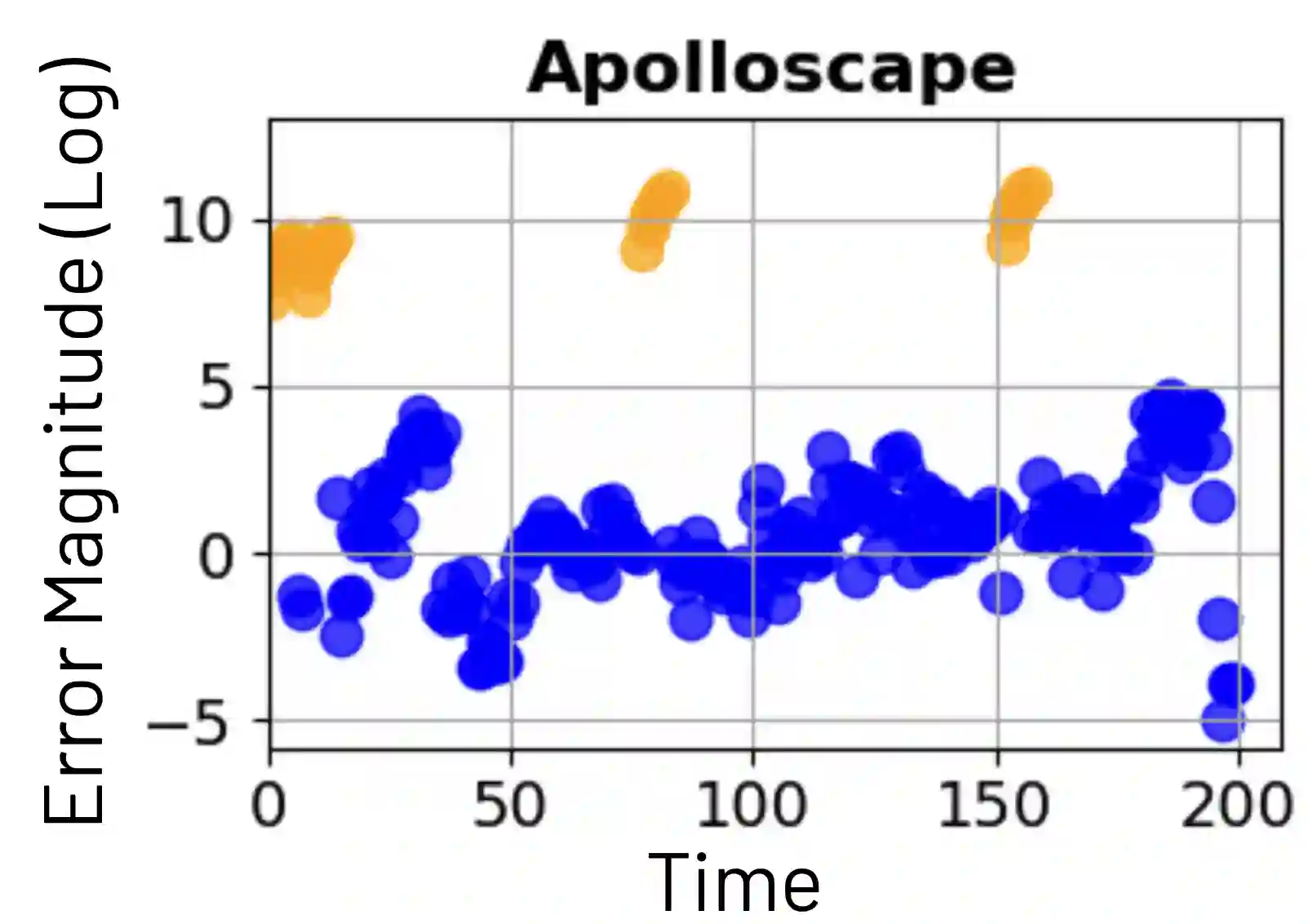

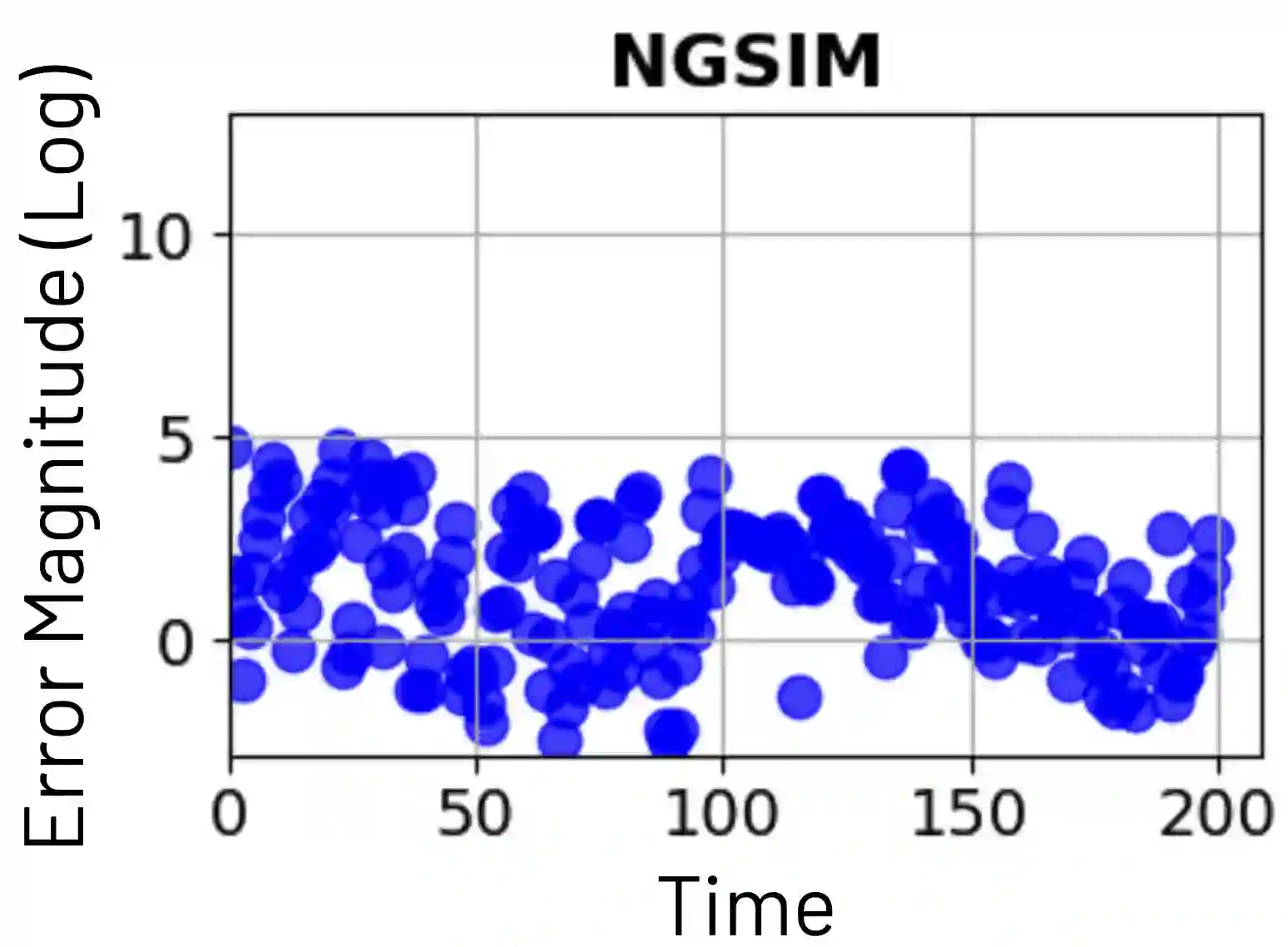

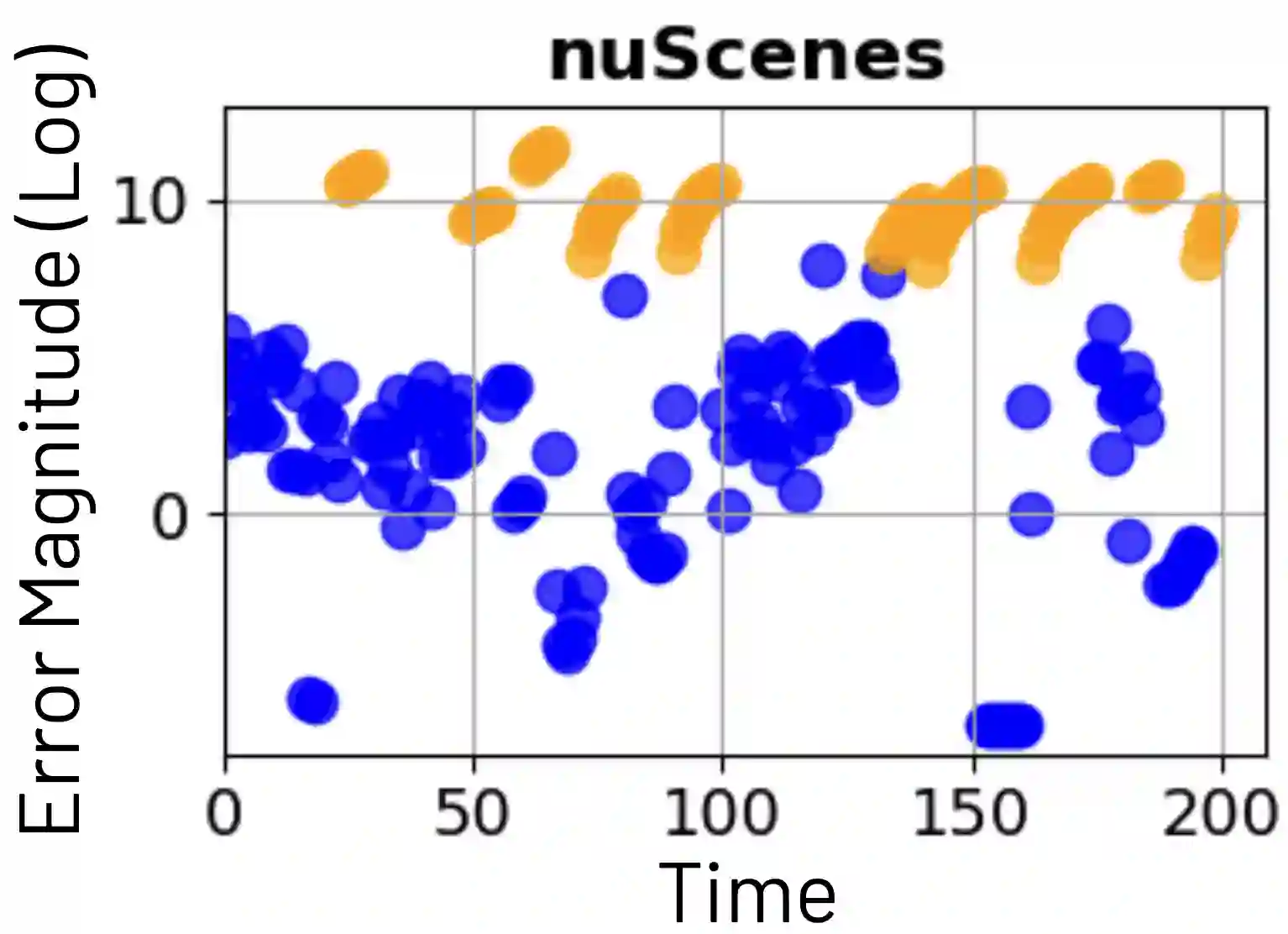

Trajectory prediction is central to the safe and seamless operation of autonomous vehicles (AVs). In deployment, however, prediction models inevitably face distribution shifts between training data and real-world conditions, where rare or underrepresented traffic scenarios induce out-of-distribution (OOD) cases. While most prior OOD detection research in AVs has concentrated on computer vision tasks such as object detection and segmentation, trajectory-level OOD detection remains largely underexplored. A recent study formulated this problem as a quickest change detection (QCD) task, providing formal guarantees on the trade-off between detection delay and false alarms [1]. Building on this foundation, we propose a new framework that introduces adaptive mechanisms to achieve robust detection in complex driving environments. Empirical analysis across multiple real-world datasets reveals that prediction errors, even on in-distribution samples, exhibit mode-dependent distributions that evolve over time with dataset-specific dynamics. By explicitly modeling these error modes, our method achieves substantial improvements in both detection delay and false alarm rates. Comprehensive experiments on established trajectory prediction benchmarks show that our framework significantly outperforms prior uncertainty quantification (UQ)-based and vision-based OOD approaches in both accuracy and computational efficiency, offering a practical path toward reliable, driving-aware autonomy.
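To make the QCD formulation concrete, the following is a minimal sketch of a textbook CUSUM detector applied to a stream of per-step prediction errors. It is not the adaptive, mode-aware framework proposed here, and it is not necessarily the detector used in [1]. It assumes a single Gaussian error mode with known pre-change mean `mu0`, post-change mean `mu1`, and shared standard deviation `sigma`. All function and parameter names are hypothetical.

```python
from typing import Iterable, Optional


def gaussian_llr(x: float, mu0: float, mu1: float, sigma: float) -> float:
    """Log-likelihood ratio log f1(x)/f0(x) for two equal-variance Gaussians."""
    return (mu1 - mu0) / sigma**2 * (x - 0.5 * (mu0 + mu1))


def cusum_alarm(errors: Iterable[float], mu0: float, mu1: float,
                sigma: float, threshold: float) -> Optional[int]:
    """Return the first index where the CUSUM statistic reaches the threshold.

    Returns None if no alarm is raised. The single-Gaussian error model is an
    illustrative assumption, not the mode-dependent model from the abstract.
    """
    w = 0.0  # CUSUM statistic, clipped at zero so old evidence does not accumulate
    for t, x in enumerate(errors):
        w = max(0.0, w + gaussian_llr(x, mu0, mu1, sigma))
        if w >= threshold:
            return t
    return None


# Synthetic error stream: in-distribution for 20 steps, then a shift at index 20.
errors = [0.5] * 20 + [2.0] * 20
alarm = cusum_alarm(errors, mu0=0.5, mu1=2.0, sigma=0.5, threshold=10.0)
print(alarm)  # 22, i.e. a detection delay of two steps after the change
```

The threshold controls the trade-off named above: raising it makes false alarms rarer but lengthens the detection delay, and lowering it does the opposite.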
