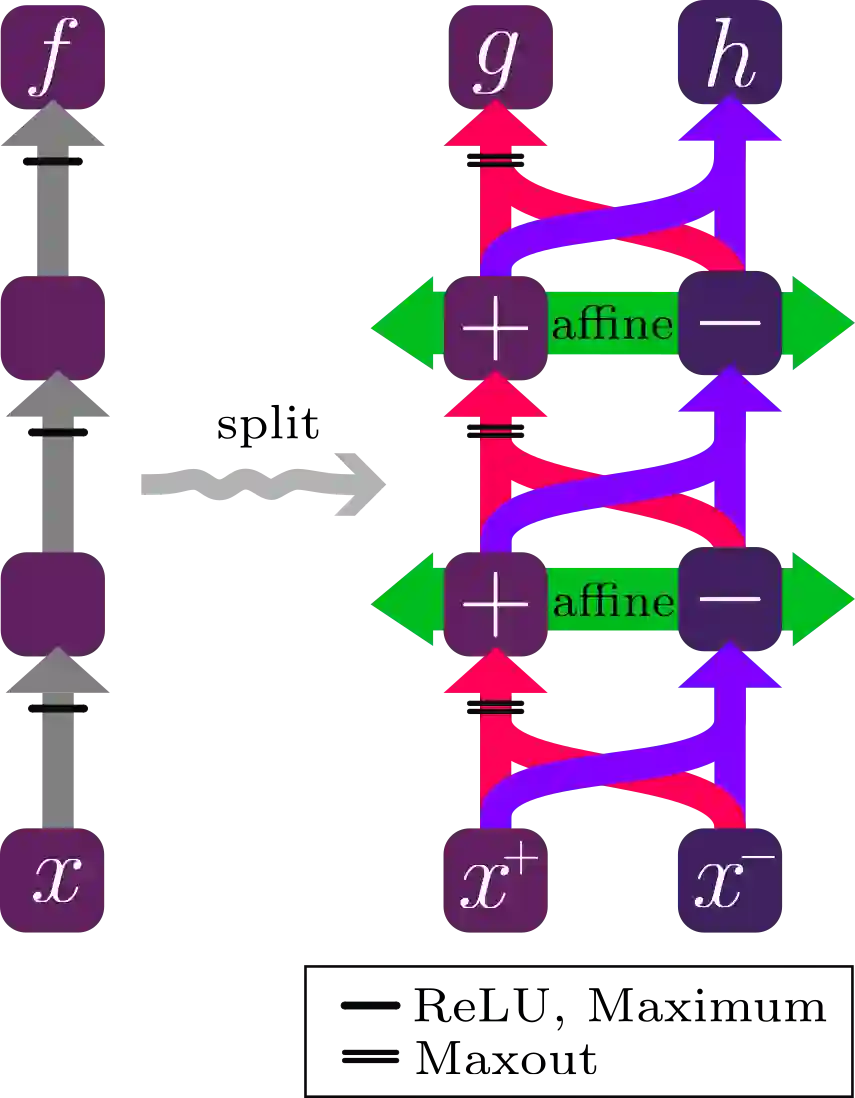

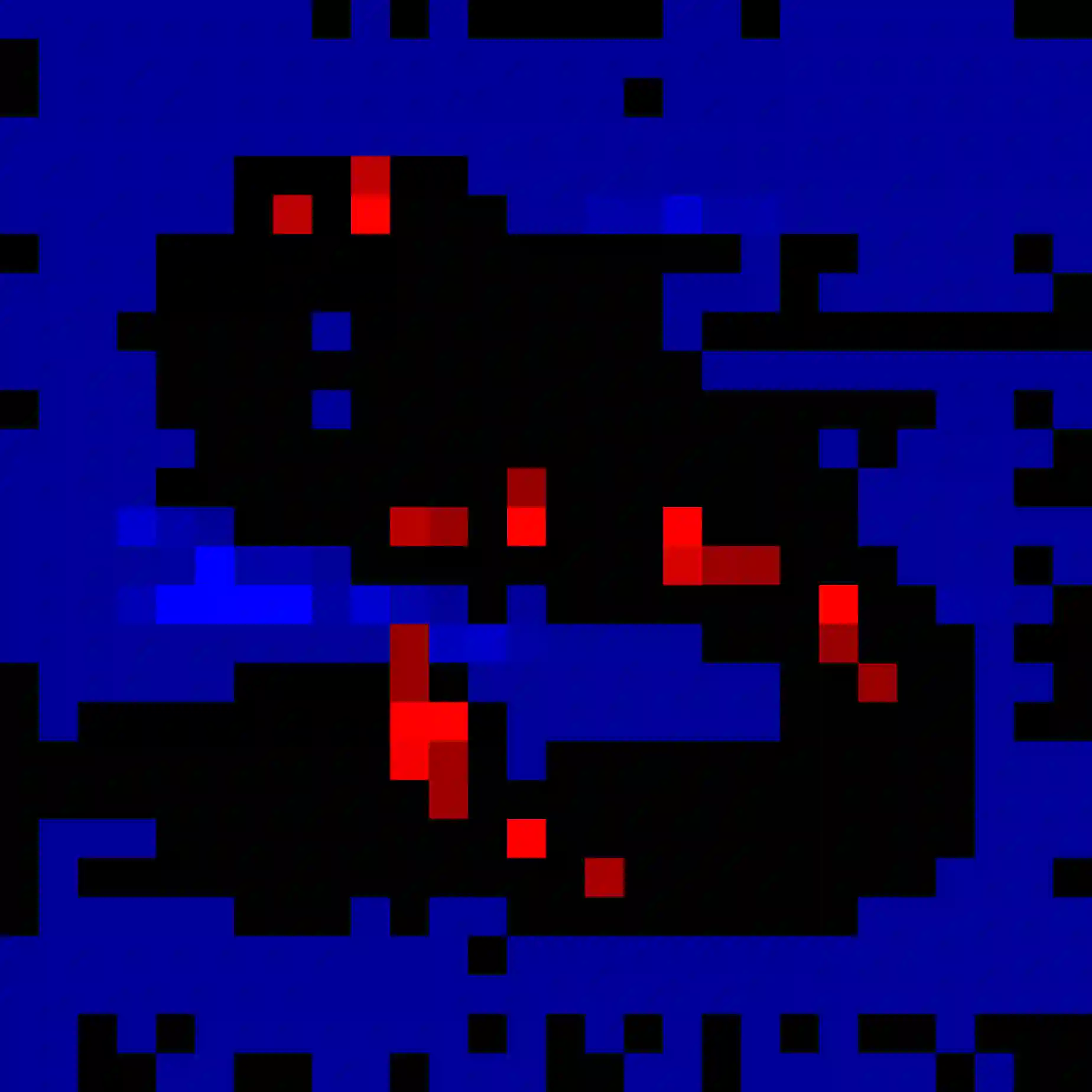

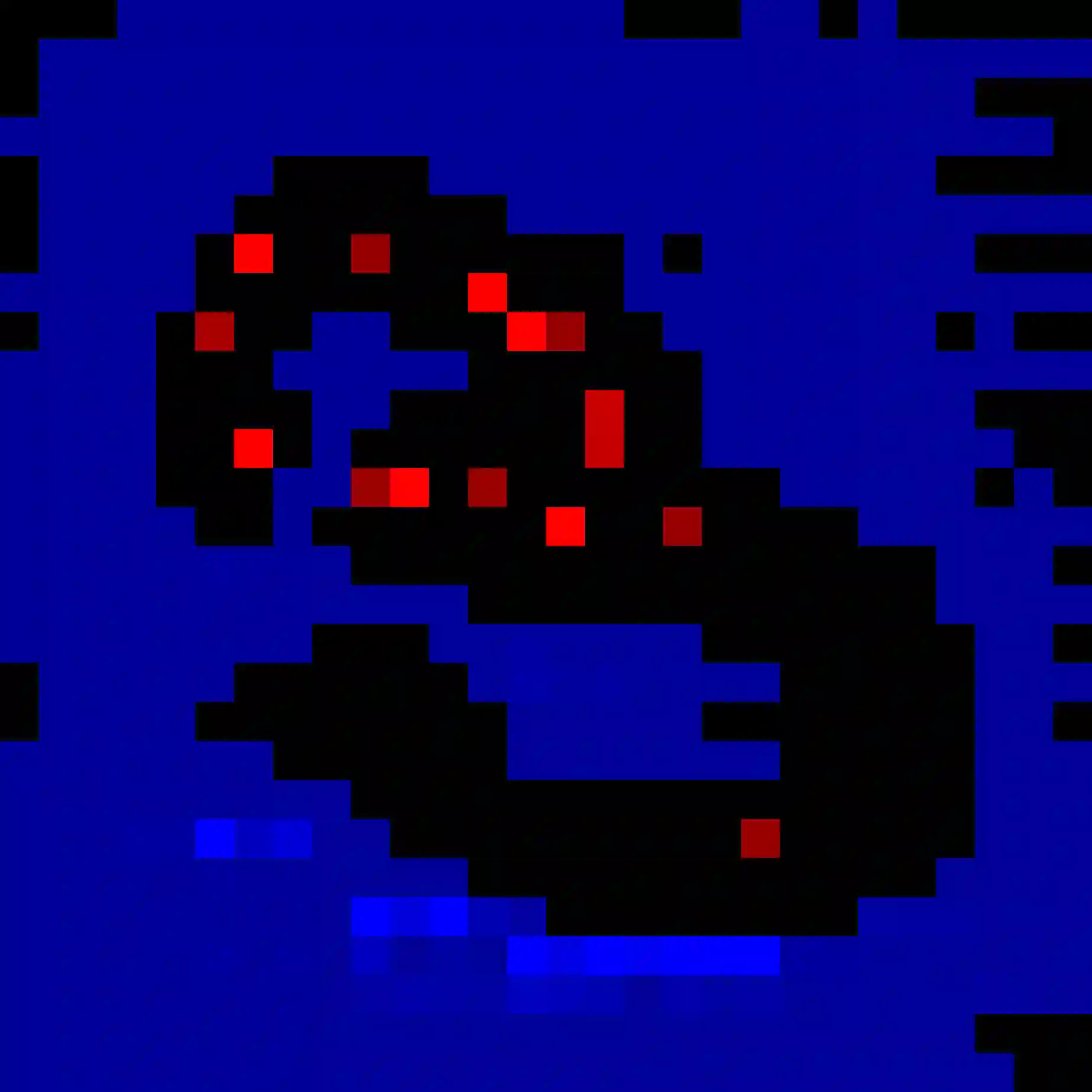

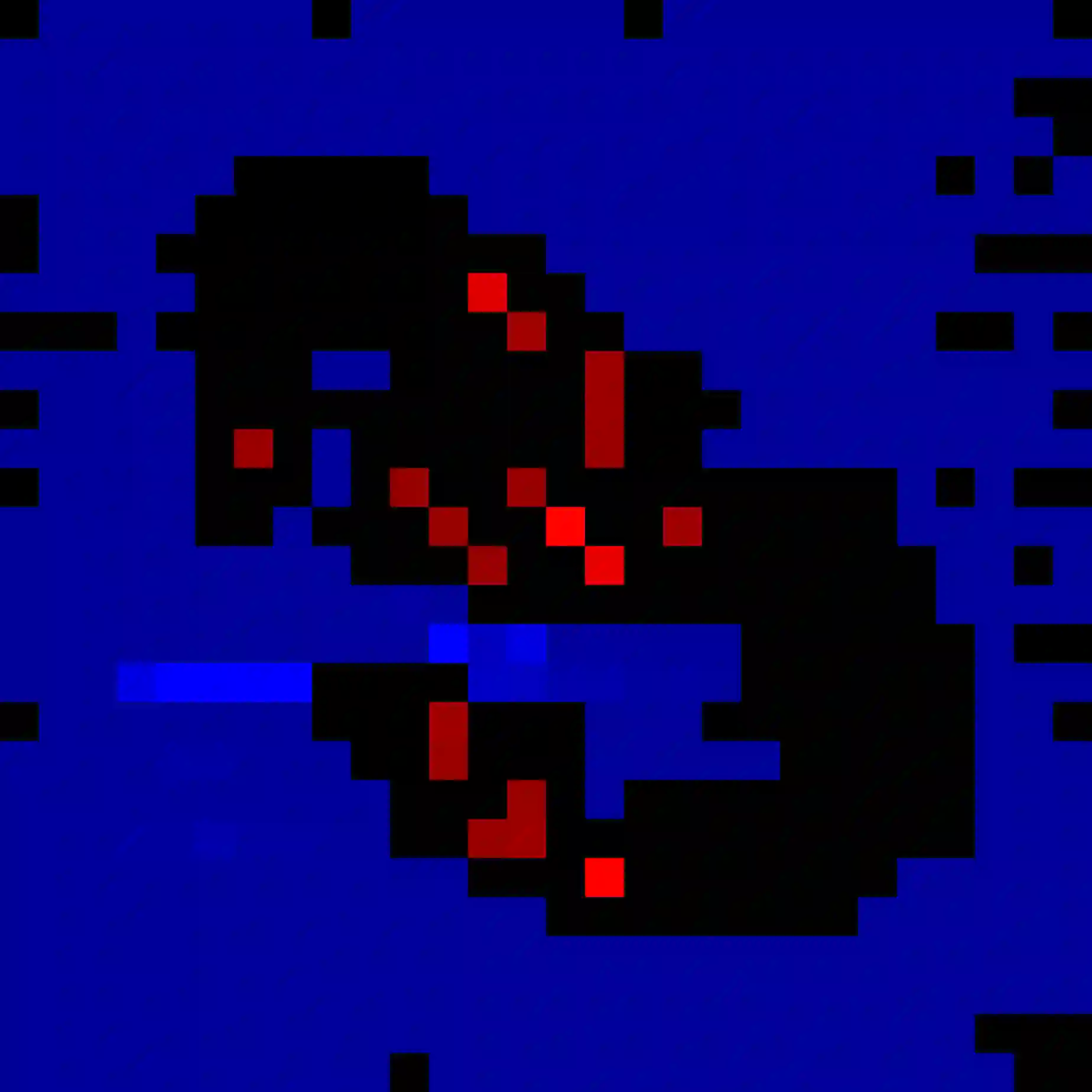

Monotonicity has been shown in various contexts to improve the explainability of neural networks. However, not every function can be well approximated by a monotone neural network. We demonstrate that monotonicity can still be used in two ways to boost explainability. First, we adapt the decomposition of a trained ReLU network into two monotone and convex parts, thereby overcoming the numerical obstacles caused by the blowup of the weights inherent in this procedure. Our proposed saliency methods, SplitCAM and SplitLRP, improve on state-of-the-art results on both VGG16 and Resnet18 networks on ImageNet-S across all Quantus saliency metric categories. Second, we show that training a model as the difference between two monotone neural networks results in a system with strong self-explainability properties.
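The decomposition mentioned above can be illustrated with a minimal sketch. It assumes a plain fully connected ReLU network with a linear last layer and uses the textbook splitting identity ReLU(a - c) = max(a, c) - c with W = W_pos - W_neg; it is not the adaptation proposed in the abstract, and the names `split_forward` and `relu_forward` are hypothetical. Each part only ever applies non-negative weights and a maximum, so both `g` and `h` are monotone non-decreasing and convex in the input.

```python
import numpy as np

def split_forward(weights, biases, x):
    """Propagate x as a pair (g, h) such that network(x) = g - h.

    Assumption: fully connected ReLU network, linear last layer.
    """
    g = x.astype(float)
    h = np.zeros_like(x, dtype=float)
    last = len(weights) - 1
    for i, (W, b) in enumerate(zip(weights, biases)):
        # Split the weights into non-negative parts: W = W_pos - W_neg.
        W_pos, W_neg = np.maximum(W, 0.0), np.maximum(-W, 0.0)
        # W @ (g - h) + b = a - c, and a, c use non-negative weights only.
        a = W_pos @ g + W_neg @ h + b
        c = W_neg @ g + W_pos @ h
        if i < last:
            # ReLU(a - c) = max(a, c) - c keeps both parts monotone and convex.
            g, h = np.maximum(a, c), c
        else:
            g, h = a, c  # last layer is linear, no activation
    return g, h

def relu_forward(weights, biases, x):
    """Ordinary forward pass of the same network, used as a reference."""
    out = x.astype(float)
    last = len(weights) - 1
    for i, (W, b) in enumerate(zip(weights, biases)):
        out = W @ out + b
        if i < last:
            out = np.maximum(out, 0.0)
    return out

# Small random network (illustrative sizes, not taken from the source).
rng = np.random.default_rng(0)
sizes = [4, 8, 8, 3]
weights = [rng.normal(size=(m, n)) for n, m in zip(sizes[:-1], sizes[1:])]
biases = [rng.normal(size=m) for m in sizes[1:]]
x = rng.normal(size=4)

g, h = split_forward(weights, biases, x)
assert np.allclose(g - h, relu_forward(weights, biases, x))
```

In this naive form the magnitudes of `g` and `h` can grow with depth even when their difference stays small, which is presumably related to the numerical obstacle the abstract refers to.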
