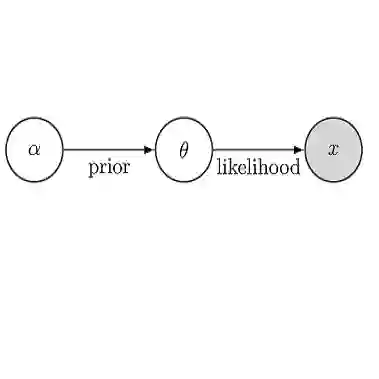

Stein variational inference (SVI) is a sample-based approximate Bayesian inference technique that generates a sample set by jointly optimizing the samples' locations to minimize an information-theoretic measure of discrepancy with the target probability distribution. SVI thus provides a fast and significantly more sample-efficient approach to Bayesian inference than traditional (random-sampling-based) alternatives. However, the optimization techniques employed in existing SVI methods struggle when the target distribution is high-dimensional, poorly conditioned, or non-convex, which severely limits their practical applicability. In this paper, we propose a novel trust-region optimization approach for SVI that successfully addresses each of these challenges. Our method builds upon prior work in SVI by leveraging conditional independences in the target distribution (to scale to high dimensions) and second-order information (to address poor conditioning), while additionally providing an effective adaptive step control procedure, which is essential for ensuring convergence on challenging non-convex optimization problems. Experimental results show that our method achieves better numerical performance than previous SVI techniques, in both convergence rate and sample accuracy, and scales better to high-dimensional distributions.
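To make "jointly optimizing the samples' locations" concrete, the following is a minimal sketch of the standard first-order Stein variational gradient descent update with an RBF kernel. It is a baseline illustration only, not the trust-region method proposed here. The fixed step size, the fixed kernel bandwidth `h`, the two-dimensional Gaussian target, and all function names are assumptions chosen for the example.

```python
import numpy as np

def svgd_step(x, score, step, h):
    """One first-order SVGD update on particles x of shape (n, d).

    score(x) returns grad log p at each particle, shape (n, d).
    step and h (RBF bandwidth) are fixed here; both are illustrative choices.
    """
    n = x.shape[0]
    diff = x[:, None, :] - x[None, :, :]            # diff[i, j] = x_i - x_j
    k = np.exp(-np.sum(diff**2, axis=-1) / (2.0 * h**2))  # k[i, j] = k(x_i, x_j)
    # Attractive term: kernel-weighted average of the scores pulls particles
    # toward high-probability regions of the target.
    attract = k @ score(x)
    # Repulsive term: gradient of the kernel pushes particles apart,
    # which keeps the sample set from collapsing onto a mode.
    repulse = np.sum(k[:, :, None] * diff, axis=1) / h**2
    return x + step * (attract + repulse) / n

# Assumed toy target: Gaussian with identity covariance and this mean.
target_mean = np.array([2.0, -1.0])

def gaussian_score(x):
    # grad log p(x) for N(target_mean, I)
    return -(x - target_mean)

rng = np.random.default_rng(0)
particles = rng.normal(size=(50, 2))                # initial samples from N(0, I)
for _ in range(500):
    particles = svgd_step(particles, gaussian_score, step=0.1, h=1.0)
```

Every particle's update depends on all the others through the kernel, which is why the sample set is optimized jointly rather than drawn independently. The fixed step and purely first-order direction used in this sketch are the kind of design choice that the abstract identifies as fragile on poorly conditioned or non-convex targets.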
