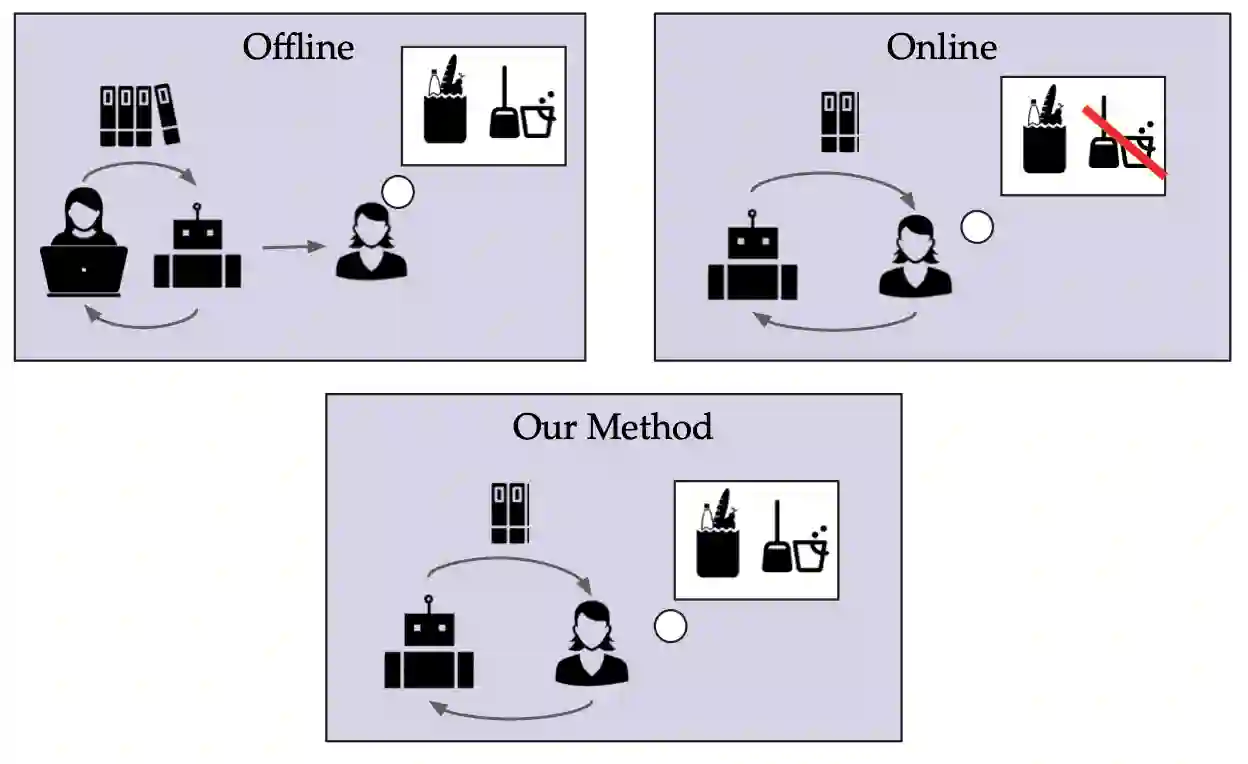

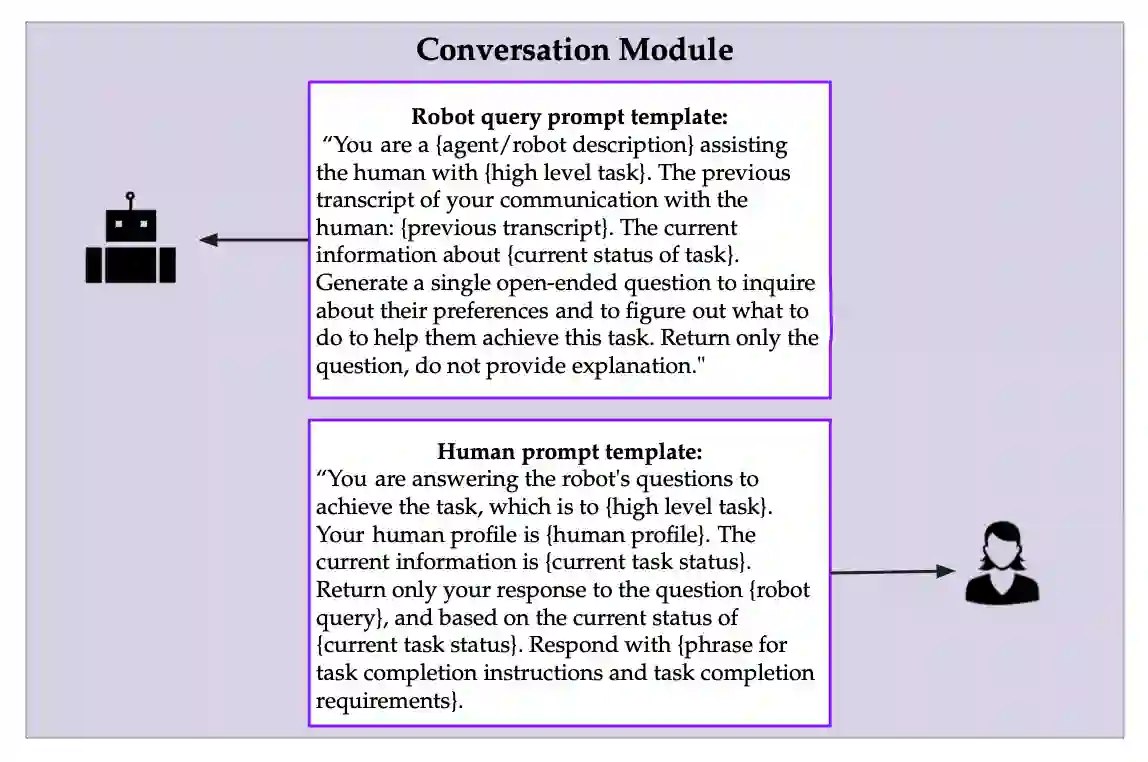

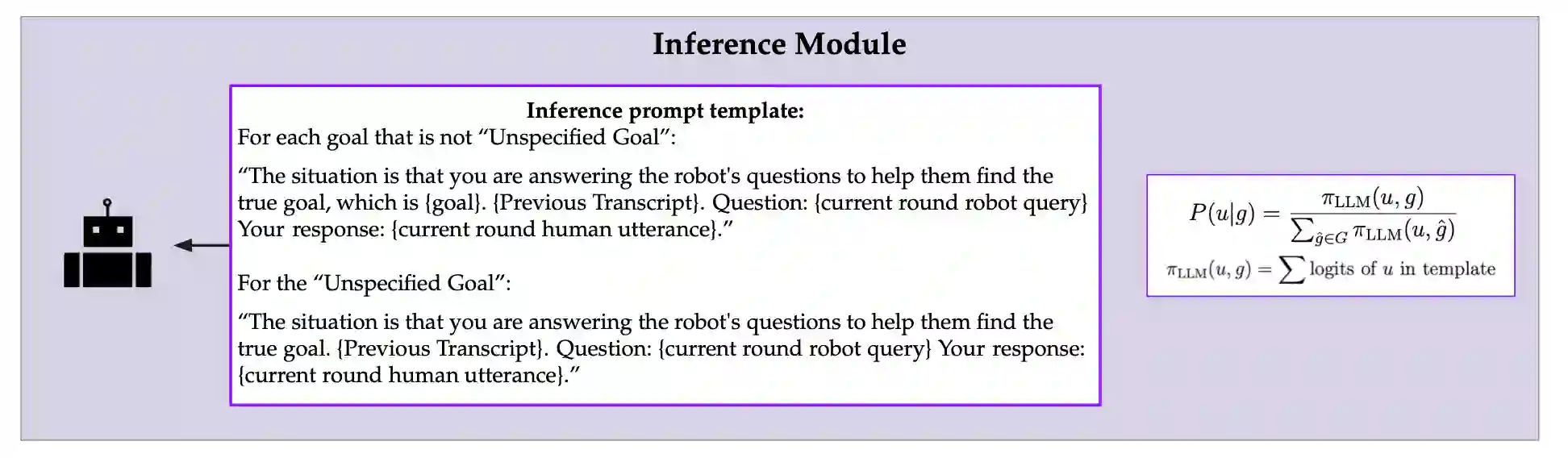

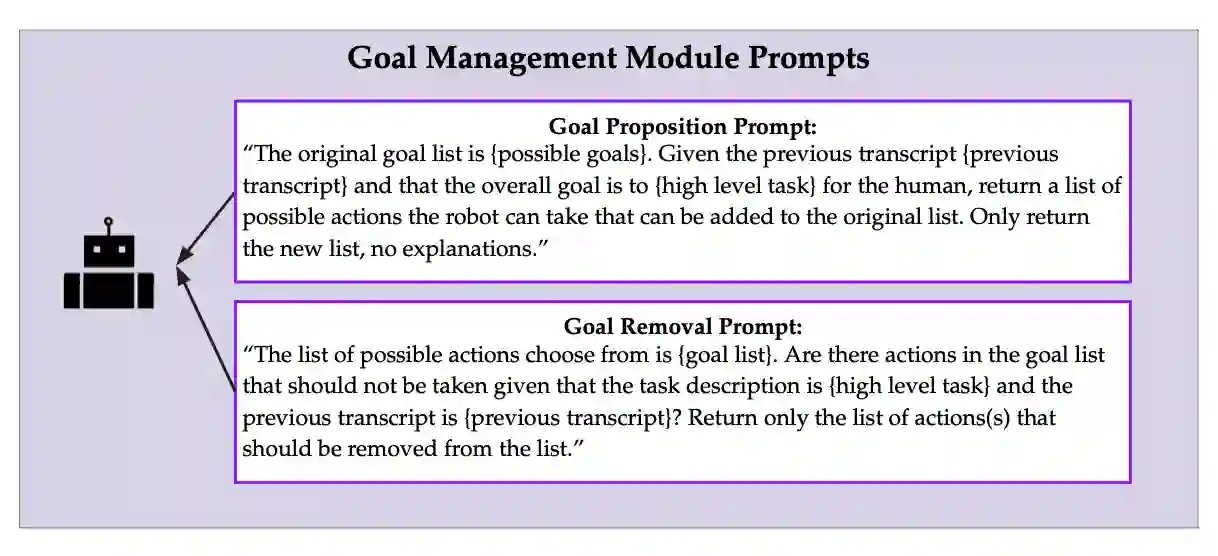

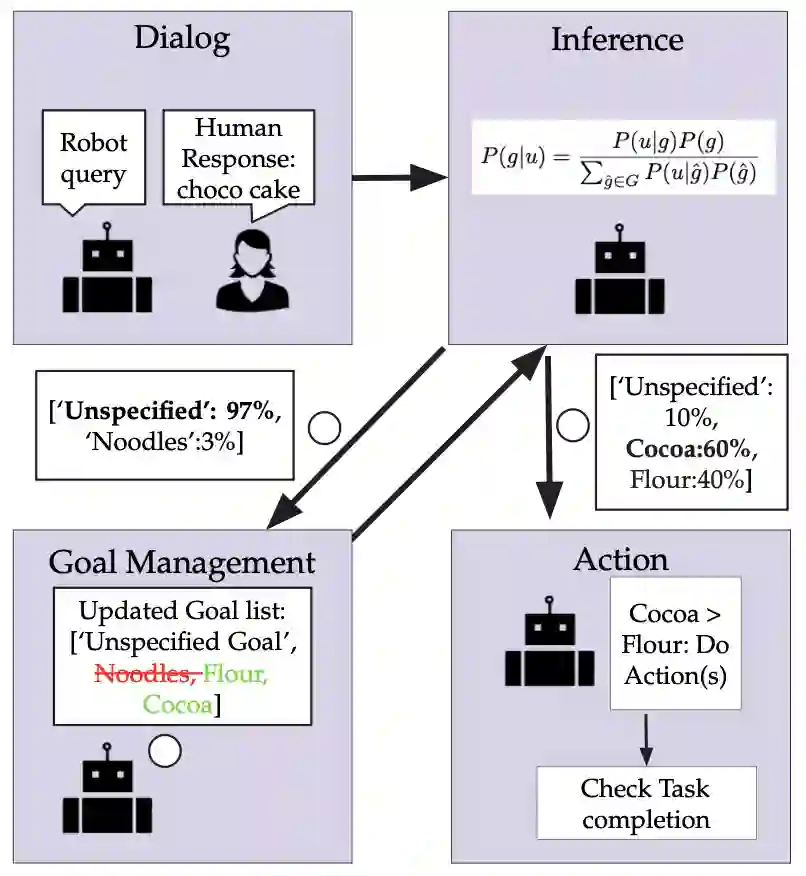

Embodied AI agents are quickly becoming important and common tools in society. These agents should be able to learn about and accomplish a wide range of user goals and preferences efficiently and robustly. Large Language Models (LLMs) are often used for this purpose because they enable rich, open-ended dialog between the human and the agent, through which tasks can be accomplished according to human preferences. In this thesis, we argue that embodied agents that engage in open-ended dialog during task assistance should: 1) extract goals from conversations in the form of natural language (NL), which better captures human preferences because natural language is an intuitive medium for humans to communicate their task preferences to agents; and 2) quantify and maintain uncertainty about these goals, so that they act only on goals about which they are highly certain. We present an online method that enables embodied agents to learn and accomplish diverse user goals. Offline methods such as RLHF can represent a variety of goals but require large datasets; our approach achieves similar flexibility while remaining efficient online. We use LLMs to extract natural language goal representations from conversations. Specifically, we prompt an LLM to role-play as a human holding different candidate goals and use the resulting likelihoods to run Bayesian inference over those goals. As a result, our method can represent uncertainty over complex goals based on unrestricted dialog. We evaluate the method in a text-based grocery shopping domain and in an AI2Thor robot simulation, comparing it against ablation baselines that lack either an explicit goal representation or probabilistic inference.
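The Bayesian goal-inference step described in the abstract can be illustrated with a minimal sketch. This is not the thesis's implementation: `toy_loglik` is a hypothetical stand-in for the LLM role-playing a human with a given goal (in the described method an LLM supplies this likelihood), and the candidate goals, the 0.9 confidence threshold, and the names `update_posterior` and `act_if_confident` are illustrative assumptions. The sketch also simplifies by treating utterances as conditionally independent given the goal. Each utterance multiplies the current belief by the likelihood of that utterance under each goal and renormalizes; the agent commits to a goal only once its posterior clears the threshold.

```python
import math
from typing import Callable, Dict, Optional

# log p(utterance | goal), up to an additive constant that does not depend on the goal.
LogLikelihood = Callable[[str, str], float]


def toy_loglik(goal: str, utterance: str) -> float:
    """Hypothetical placeholder for the LLM score.

    In the described method an LLM role-playing a human with `goal` would
    supply this likelihood; here each word shared by the goal and the
    utterance simply adds 2.0 to the (unnormalized) log-likelihood.
    """
    shared = set(goal.lower().split()) & set(utterance.lower().split())
    return 2.0 * len(shared)


def update_posterior(prior: Dict[str, float], utterance: str,
                     loglik: LogLikelihood) -> Dict[str, float]:
    """One Bayes step: p(g | u) is proportional to p(u | g) * p(g).

    Assumes all prior probabilities are positive and treats utterances as
    conditionally independent given the goal (a simplification).
    """
    log_post = {g: math.log(p) + loglik(g, utterance) for g, p in prior.items()}
    peak = max(log_post.values())  # subtract the max before exp for stability
    weights = {g: math.exp(lp - peak) for g, lp in log_post.items()}
    total = sum(weights.values())
    return {g: w / total for g, w in weights.items()}


def act_if_confident(posterior: Dict[str, float],
                     threshold: float = 0.9) -> Optional[str]:
    """Return the most probable goal if it clears `threshold`, else None.

    None stands in for "not confident enough to act yet" (hypothetical policy).
    """
    goal, p = max(posterior.items(), key=lambda kv: kv[1])
    return goal if p >= threshold else None


# Usage with hypothetical candidate goals and a uniform prior.
goals = ["buy vegan groceries", "buy cheap groceries", "buy organic groceries"]
belief = {g: 1.0 / len(goals) for g in goals}

belief = update_posterior(belief, "i am vegan so no meat", toy_loglik)
print(round(belief["buy vegan groceries"], 3))  # prints: 0.787
print(act_if_confident(belief))  # prints: None

belief = update_posterior(belief, "anything vegan works for me", toy_loglik)
print(act_if_confident(belief))  # prints: buy vegan groceries
```

After the first utterance the most likely goal is already "buy vegan groceries", but at about 0.79 it falls short of the assumed threshold, so the sketch declines to act; a second consistent utterance pushes it above 0.9. Working in log space and subtracting the maximum before exponentiating keeps the normalization numerically stable when LLM log-likelihoods are large in magnitude.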

翻译:具身人工智能代理正迅速成为社会中重要且普遍的工具。这些具身代理应能高效且稳健地学习和完成用户广泛的目标与偏好。大型语言模型常被采用,因其支持人与代理之间通过丰富开放的对话式交互,根据人类偏好完成任务。本论文主张,对于在任务协助中处理开放式对话的具身代理:1)人工智能代理应以自然语言形式从对话中提取目标,以更好地捕捉人类偏好,因为人类通过自然语言向代理传达任务偏好是直观的。2)人工智能代理应对这些目标进行量化/维持不确定性,以确保行动仅基于代理高度确信的目标执行。我们提出了一种在线方法,使具身代理能够学习并完成多样化的用户目标。尽管离线方法(如RLHF)能表征多种目标但需要大规模数据集,我们的方法以在线效率实现了类似的灵活性。我们利用大型语言模型从对话中提取自然语言目标表征。我们提示大型语言模型扮演具有不同目标的人类角色,并利用相应的似然度对潜在目标进行贝叶斯推断。因此,我们的方法能够基于无限制对话表征复杂目标的不确定性。我们在基于文本的杂货购物领域和AI2Thor机器人仿真中评估了该方法,并与缺乏显式目标表征或概率推断的消融基线进行了比较。