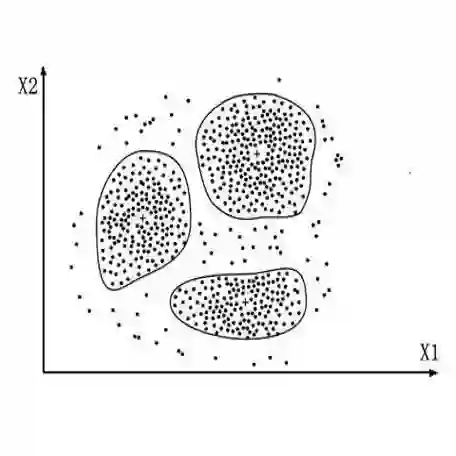

Text clustering is an important method for organising the increasing volume of digital content, helping to structure uncategorised data and uncover hidden patterns in it. Its effectiveness depends largely on the choice of textual embeddings and clustering algorithms. This study argues that recent advances in large language models (LLMs) have the potential to improve this task. It investigates how different textual embeddings, particularly those used in LLMs, and various clustering algorithms influence the clustering of text datasets. A series of experiments was conducted to evaluate the impact of embeddings on clustering results, the role of dimensionality reduction through summarisation, and the effect of model size. The findings indicate that LLM embeddings are superior at capturing subtleties in structured language. OpenAI's GPT-3.5 Turbo model yields better results in three out of five clustering metrics across most tested datasets. Most LLM embeddings improve cluster purity and provide a more informative silhouette score, reflecting a more refined structural understanding of text data than traditional methods achieve. Among the more lightweight models, BERT demonstrates leading performance. Increasing model dimensionality and applying summarisation techniques do not consistently enhance clustering efficiency, which suggests that these strategies require careful consideration in practical applications. These results highlight a complex balance between the need for refined text representation and computational feasibility in text clustering applications. This study extends traditional text clustering frameworks by integrating embeddings from LLMs, offering improved methodologies and suggesting new avenues for future research in various types of textual analysis.
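Cluster purity, one of the metrics named above, can be illustrated with a minimal sketch. It assumes the common definition (the fraction of items that carry the majority ground-truth label of their assigned cluster), which the abstract itself does not spell out. The function name `cluster_purity` and the toy labels are hypothetical and not taken from the study.

```python
from collections import Counter, defaultdict


def cluster_purity(true_labels, cluster_ids):
    """Fraction of items that carry the majority label of their cluster.

    Assumed (standard) definition; the study may compute purity differently.
    """
    if len(true_labels) != len(cluster_ids):
        raise ValueError("true_labels and cluster_ids must have the same length")
    if not true_labels:
        raise ValueError("at least one item is required")

    # Group the ground-truth labels by the cluster each item was assigned to.
    members = defaultdict(list)
    for label, cluster in zip(true_labels, cluster_ids):
        members[cluster].append(label)

    # For each cluster, count the items that share its most common label.
    majority_total = sum(
        Counter(labels).most_common(1)[0][1] for labels in members.values()
    )
    return majority_total / len(true_labels)


# Toy data, made up for illustration: six documents, two topics.
true_labels = ["sport", "sport", "sport", "tech", "tech", "tech"]
cluster_ids = [0, 0, 1, 1, 1, 1]

purity = cluster_purity(true_labels, cluster_ids)
print(f"purity = {purity:.3f}")
```

In the toy data, cluster 0 contains two "sport" documents and cluster 1 contains one "sport" and three "tech" documents, so five of the six items match their cluster's majority label. Purity rewards homogeneous clusters but rises trivially as the number of clusters grows, which is one reason to report it alongside other metrics such as the silhouette score.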
